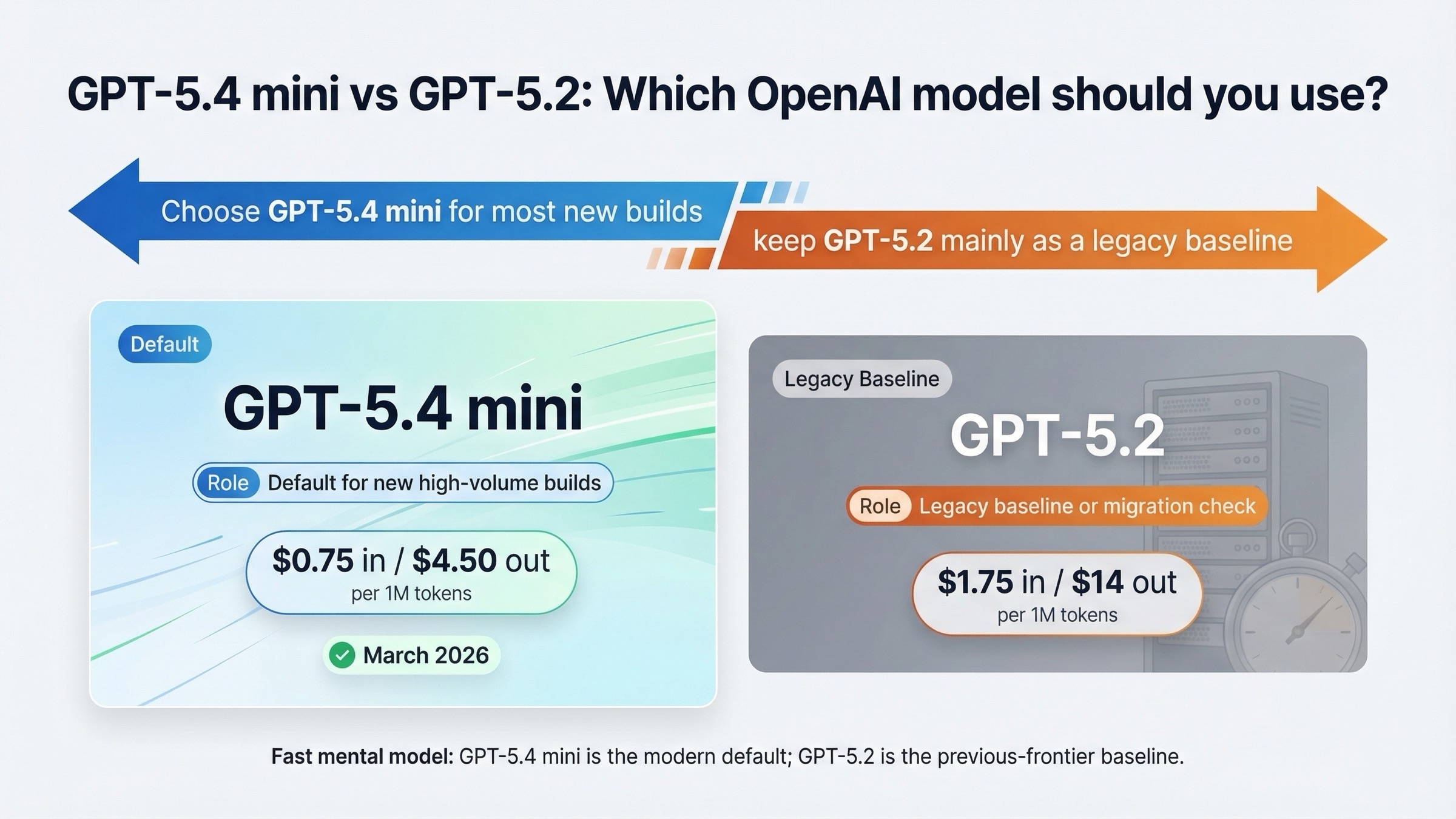

Short answer: as of March 21, 2026, most new API builds should choose GPT-5.4 mini, not GPT-5.2. OpenAI's latest model guide says GPT-5.4 replaced GPT-5.2 in the API, while GPT-5.4 mini is the smaller, faster variant OpenAI recommends for high-volume coding, computer use, and agent workflows that still need strong reasoning.

That does not mean GPT-5.2 has zero value. It still matters as a legacy baseline, a migration checkpoint, or a way to validate how an older production path behaves before you switch it over. But if your real question is "what should I start with for a new product in 2026?", GPT-5.4 mini is usually the safer answer. And if what you actually want is the modern successor to GPT-5.2's deeper frontier lane, the better model is GPT-5.4, not GPT-5.4 mini.

This guide is based on official OpenAI model pages, launch posts, and Help Center articles opened and checked on March 21, 2026. It also keeps one important constraint in view: OpenAI has not published a clean official benchmark table that directly pits GPT-5.4 mini against GPT-5.2, so this article is a routing guide, not a fake head-to-head bakeoff.

TL;DR

- Choose GPT-5.4 mini for most new high-volume coding, computer-use, and agent workflows.

- Keep GPT-5.2 only as a legacy baseline, migration checkpoint, or validation path.

- Choose GPT-5.4 instead if what you actually need is the current frontier default for important work.

Quick comparison: GPT-5.4 mini vs GPT-5.2

| Category | GPT-5.4 mini | GPT-5.2 | What it means in practice |

|---|---|---|---|

| Current official role | Small fast GPT-5.4 branch for high-volume coding, computer use, and agents | Previous frontier model for professional work | These are not the same lane in OpenAI's current lineup |

| Best for | New high-volume coding assistants, subagents, tool-heavy flows, cost-sensitive agent loops | Legacy baselines, migration checks, older prompt-path validation | Most new builds should start on GPT-5.4 mini |

| Input price | $0.75 / 1M | $1.75 / 1M | GPT-5.4 mini is much cheaper on input |

| Cached input | $0.075 / 1M | $0.175 / 1M | GPT-5.4 mini is also much cheaper for repeat-heavy traffic |

| Output price | $4.50 / 1M | $14.00 / 1M | GPT-5.2 is dramatically more expensive on output |

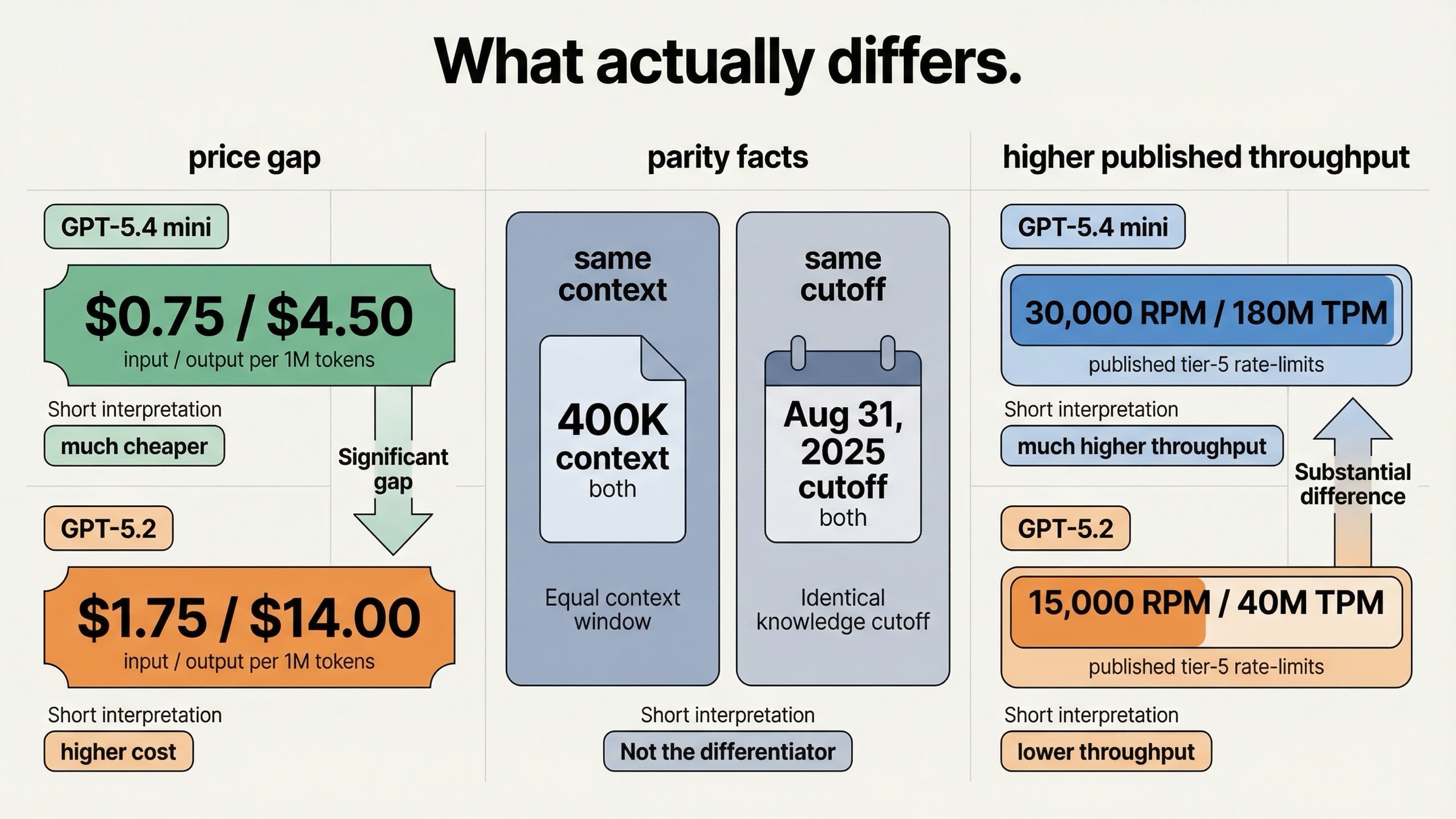

| Context window | 400K | 400K | No context advantage for GPT-5.2 |

| Max output | 128K | 128K | Tie |

| Knowledge cutoff | Aug 31, 2025 | Aug 31, 2025 | GPT-5.4 mini is not fresher here |

| Published Tier 5 limits | 30,000 RPM / 180M TPM | 15,000 RPM / 40M TPM | GPT-5.4 mini is the better throughput lane |

| Snapshot shown on model page | gpt-5.4-mini-2026-03-17 | gpt-5.2-2025-12-11 | GPT-5.4 mini is the current small-model line |

| Main caveat | Not the official frontier successor to GPT-5.2 | No longer the recommended default for new work | If you need the modern frontier lane, use GPT-5.4 |

The most useful takeaway from this table is not that one model is newer. It is that GPT-5.4 mini wins the modern default argument on economics and throughput, while GPT-5.2 no longer wins the default argument at all. It survives mostly as a comparison point and a controlled migration lane.

That is why this keyword confuses people. The names look like a simple upgrade question, but the product roles are now different. One model is the current smaller, faster branch. The other is the previous-frontier branch.

Why this comparison is really a routing question, not a benchmark duel

If you only skim model names, this query looks reasonable: a newer mini model versus an older non-mini model. But OpenAI's current docs do not frame the choice that way.

The timeline makes that easier to see. GPT-5.2 launched on December 11, 2025, while GPT-5.4 mini launched on March 17, 2026. The names are only a few digits apart, but the products now sit in different parts of OpenAI's current lineup.

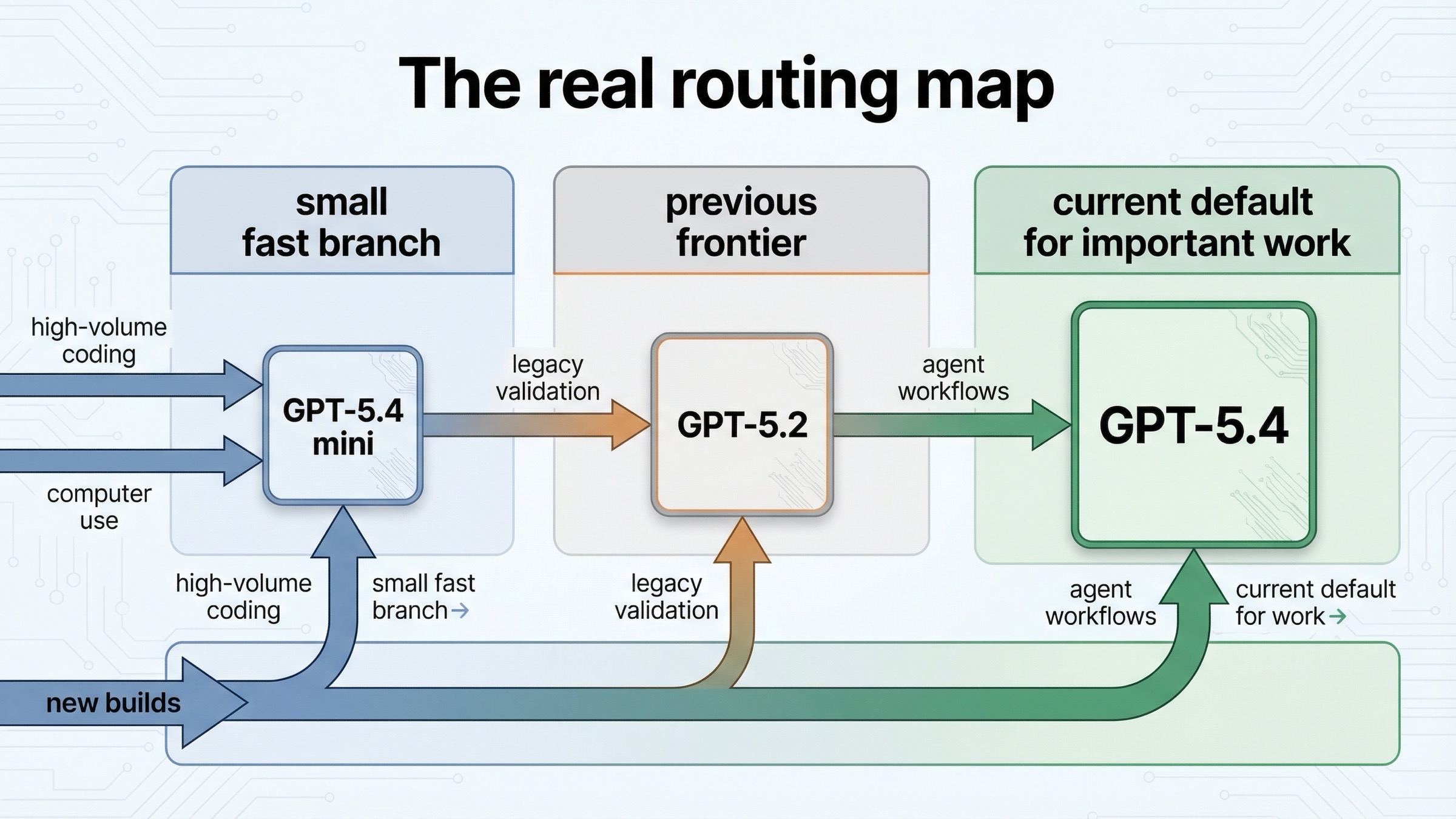

The latest model guide says three things that matter more than any guesswork:

- `gpt-5.4` is now the default for your most important work

- `gpt-5.4` replaced `gpt-5.2` in the API

- `gpt-5.4-mini` is the smaller, faster option for high-volume coding, computer use, and agent workflows

That is the whole routing story in one place.

So if you came here thinking GPT-5.4 mini must be the direct successor to GPT-5.2, the official docs say otherwise. GPT-5.4 mini is the small-model branch. GPT-5.2 was the previous frontier branch. The direct successor to GPT-5.2's role is GPT-5.4.

This matters because it changes how you read every other spec row.

For example, many readers will assume the newer mini model must have a fresher cutoff or a larger context window than the older full model. That is not true on the live model pages. Both currently list 400K context and an Aug 31, 2025 knowledge cutoff. That means the decision is not about one model holding more context or knowing later facts. It is mostly about cost, throughput, current product direction, and whether you are choosing a new default or benchmarking a legacy lane.

This is also where many third-party comparison pages become less useful than they first appear. They often summarize official facts correctly, but then they force those facts into a story that sounds more certain than the evidence really is. OpenAI has published clean benchmark tables for GPT-5.4 vs GPT-5.2, and for GPT-5.4 mini vs GPT-5 mini, but not for GPT-5.4 mini vs GPT-5.2. A serious article should say that out loud.

The specs that actually matter here

If you are choosing between these two models for real work, four details matter much more than vague claims about "intelligence."

The first is price. GPT-5.4 mini is dramatically cheaper: $0.75 input / $4.50 output per 1M text tokens, compared with $1.75 input / $14.00 output for GPT-5.2. That difference is large enough to reshape the default choice on its own for many agent products, especially if the model sits inside subagent loops or long-running coding workflows.
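To make the price gap concrete, here is a minimal cost-estimate sketch. The per-1M prices come from the comparison table above; the function name `estimate_cost`, the `cached_fraction` parameter, and the token volumes in the usage note are illustrative assumptions, not anything OpenAI publishes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1M text tokens, as listed in this article (checked March 21, 2026)."""
    input_per_m: float
    cached_input_per_m: float
    output_per_m: float


PRICES = {
    "gpt-5.4-mini": Pricing(0.75, 0.075, 4.50),
    "gpt-5.2": Pricing(1.75, 0.175, 14.00),
}


def estimate_cost(model, input_tokens, output_tokens, cached_fraction=0.0):
    """Estimate USD cost for a batch of traffic.

    cached_fraction is the (assumed) share of input tokens billed at the
    cached-input rate; real cache hit rates depend on your prompt mix.
    """
    p = PRICES[model]
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    total = (
        fresh * p.input_per_m
        + cached * p.cached_input_per_m
        + output_tokens * p.output_per_m
    )
    return total / 1_000_000


if __name__ == "__main__":
    for name in PRICES:
        print(name, estimate_cost(name, 1_000_000, 1_000_000))
```

For a hypothetical 1M input tokens and 1M output tokens with no caching, this works out to $5.25 on GPT-5.4 mini versus $15.75 on GPT-5.2, a 3x difference. Output-heavy workloads widen the gap, because the output price ratio is larger than the input price ratio.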

The second is published rate limits. GPT-5.4 mini's current model page shows much better throughput ceilings. At Tier 5, GPT-5.4 mini lists 30,000 RPM and 180 million TPM, while GPT-5.2 lists 15,000 RPM and 40 million TPM. Even before you think about model quality, that is a strong signal that OpenAI expects GPT-5.4 mini to carry more high-volume production traffic.
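A quick headroom check shows how the published ceilings translate into capacity planning. This is a sketch only: the Tier 5 numbers are the ones quoted above, while `fits_tier5` and the example traffic shape are hypothetical, and real limits depend on your account tier.

```python
# Published Tier 5 limits as quoted in this article (checked March 21, 2026).
TIER5_LIMITS = {
    "gpt-5.4-mini": {"rpm": 30_000, "tpm": 180_000_000},
    "gpt-5.2": {"rpm": 15_000, "tpm": 40_000_000},
}


def fits_tier5(model, requests_per_min, avg_tokens_per_request):
    """Return True if the traffic shape stays within both RPM and TPM ceilings."""
    limits = TIER5_LIMITS[model]
    tokens_per_min = requests_per_min * avg_tokens_per_request
    return requests_per_min <= limits["rpm"] and tokens_per_min <= limits["tpm"]


if __name__ == "__main__":
    # Hypothetical agent loop: 10,000 requests/min at about 8,000 tokens each.
    for name in TIER5_LIMITS:
        print(name, fits_tier5(name, 10_000, 8_000))
```

In that hypothetical case the traffic is 80M tokens per minute, which fits under GPT-5.4 mini's 180M TPM ceiling but exceeds GPT-5.2's 40M TPM, even though both models clear the RPM limit.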

The third is role labeling. GPT-5.4 mini's model page calls it OpenAI's strongest mini model yet for coding, computer use, and subagents. GPT-5.2's model page calls it the previous frontier model for professional work and explicitly recommends using the latest GPT-5.4 instead. That makes GPT-5.2 a weak place to start from if you are designing something new.

The fourth is what is not different. Both models currently show the same context window, the same max output, and the same cutoff. That removes several easy excuses for staying on GPT-5.2. If you are paying more for GPT-5.2, you are not buying later knowledge or more context. You are mostly keeping a previous-frontier lane alive for your own reasons.

One subtle point is worth adding here. GPT-5.4 mini's model page makes its Responses API tool support very explicit: web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, and tool search are all marked supported. GPT-5.2's model page does not present the same kind of symmetric tool matrix. That does not prove GPT-5.2 cannot be used in sophisticated workflows. It does tell you where OpenAI is putting its current small-model product emphasis.

If you want a cleaner in-family comparison on the mini side, our guide to GPT-5.4 mini vs GPT-5 mini is the better follow-up. If you want the frontier-lane story, GPT-5.4 vs GPT-5.2 is the more direct sibling article.

When GPT-5.4 mini is the better choice

GPT-5.4 mini is the better choice in the situations that matter most for new products: you need a model that is cheap enough to call often, fast enough to sit inside loops, and current enough to match OpenAI's active product direction.

The clearest case is high-volume coding and agent workflows. OpenAI's own guide points directly at GPT-5.4 mini for high-volume coding, computer use, and agent work that still needs strong reasoning. That already covers a large share of modern API products: coding helpers, internal dev tools, browser or screenshot-based assistants, and multi-step workflow agents where the model is called repeatedly rather than once.

It is also the stronger choice when throughput limits matter. The price difference is big, but the rate-limit difference may matter even more if you are operating at scale. A model that is cheaper and allowed to push far more TPM is easier to build around than a model that costs more while sitting on a lower published ceiling.

GPT-5.4 mini is also the better pick when your team keeps reaching for GPT-5.2 mainly out of habit. The live docs do not support that habit anymore. GPT-5.2 is no longer the default recommendation for new work. If you are starting fresh and your workload fits the small-model lane, GPT-5.4 mini is the model OpenAI is actively steering you toward.

There is one more reason GPT-5.4 mini wins so often here: it does so without giving up context or cutoff versus GPT-5.2. That removes a lot of the usual psychological resistance to choosing a mini model. In this case, "mini" mostly signals lower cost and faster routing, not a smaller context window.

Choose GPT-5.4 mini when most of these are true:

- you are building a new API product in 2026

- you expect high call volume

- you want a model for coding helpers, subagents, or computer-use style workflows

- you care about price and rate limits more than preserving older GPT-5.2 behavior

- you do not specifically need the modern frontier lane, because if you did, you would be looking at GPT-5.4

When GPT-5.2 still makes sense

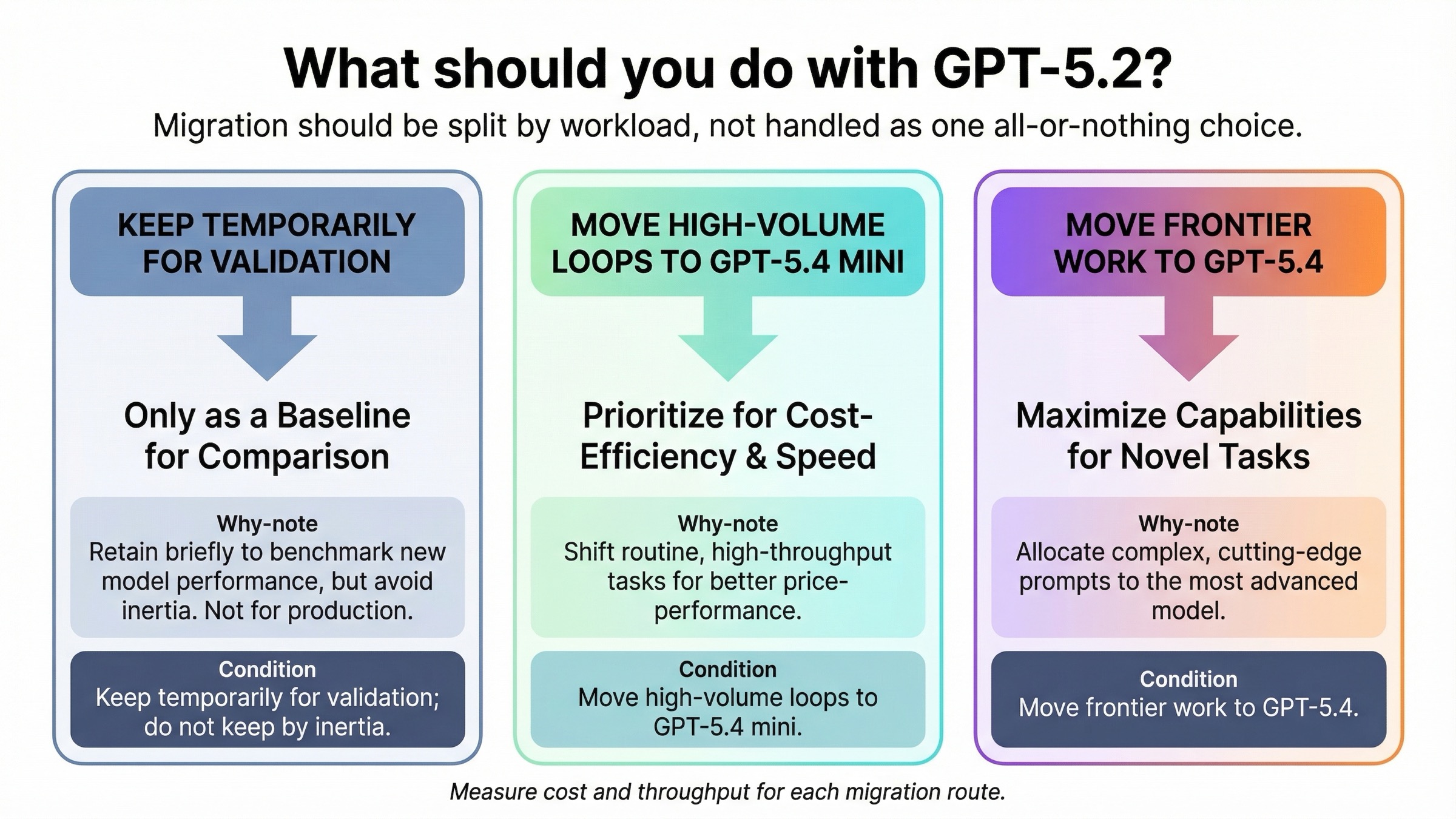

GPT-5.2 still makes sense, but mostly in controlled, temporary, or measurement-heavy situations.

The strongest case is migration validation. If your team already ships a GPT-5.2 route, it can be useful to keep that route alive while you benchmark what changes when you switch. That is not the same as recommending GPT-5.2 as the best default. It means GPT-5.2 still has value as a baseline you already understand.

The second case is behavior continuity. Some teams care less about buying the newest best default and more about not disturbing a production path that is already tuned, monitored, and well understood. In that case, GPT-5.2 can stay in place while you run controlled tests against GPT-5.4 mini and GPT-5.4. That is a rational engineering decision, especially if the model sits behind user-facing workflows where prompt behavior changes create real review costs.

The third case is surface confusion outside the API. OpenAI's current Enterprise and Edu Help Center article still uses GPT-5.2 language to describe the newest ChatGPT workspace experience, while API access remains separate. If your team mixes ChatGPT workspace decisions with API routing decisions, GPT-5.2 can remain part of the live conversation longer than it should. That does not make it the right API default. It just means the naming environment is messy enough that teams may need a deliberate transition period.

There is also a smaller operational point. During the GPT-5.2 rollout, the OpenAI Developer Community logged real endpoint-specific friction around image-input billing behavior. OpenAI support replied that engineering had deployed a fix, but the bigger lesson remains: legacy routes should be tested with real workloads, not trusted because the model name feels familiar. If you are keeping GPT-5.2, do it intentionally and measure it.

So the useful rule is:

- keep GPT-5.2 when you are validating or migrating an older path

- do not keep GPT-5.2 just because it used to be the frontier default

That distinction is the difference between a reasonable temporary choice and a stale long-term one.

The model many readers actually want is GPT-5.4

This is the part many articles dodge because it complicates the keyword. It still needs to be said.

If you are hesitant to choose GPT-5.4 mini because "mini" sounds like a downgrade, then GPT-5.2 is usually not the right escape hatch. The model OpenAI wants you to use for the important-work successor lane is GPT-5.4.

That is the real modern replacement for GPT-5.2's old position in the lineup.

So before you commit to this keyword's binary choice, ask yourself what you are actually trying to preserve:

- If you want cheap, fast, high-volume agentic work, choose GPT-5.4 mini.

- If you want the current frontier default for important work, choose GPT-5.4.

- If you are keeping GPT-5.2, make sure you are doing it for migration or measurement, not because you think it is still the current recommended lane.

That is why the most practical upgrade path is often not "5.2 or 5.4 mini forever?" but rather "5.2 as the legacy baseline, 5.4 mini as the smaller new default, and 5.4 as the frontier destination when the workload justifies it."

If that is the comparison you were really trying to make, read GPT-5.4 vs GPT-5.4 mini after this article. It is the cleaner decision once the legacy baseline question is out of the way.
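The three-way rule in this section can be written down as a small routing table. This is a minimal sketch: the model identifiers are the ones named in OpenAI's guide as quoted earlier, while the `Workload` categories and `pick_model` helper are hypothetical names chosen for illustration.

```python
from enum import Enum


class Workload(Enum):
    """Hypothetical workload lanes, mirroring the three bullets above."""
    HIGH_VOLUME_AGENT = "high_volume_agent"    # cheap, fast, agentic work
    FRONTIER = "frontier"                      # important work, current default
    LEGACY_VALIDATION = "legacy_validation"    # migration or measurement only


ROUTES = {
    Workload.HIGH_VOLUME_AGENT: "gpt-5.4-mini",
    Workload.FRONTIER: "gpt-5.4",
    Workload.LEGACY_VALIDATION: "gpt-5.2",
}


def pick_model(workload):
    """Map a workload lane to the model this article recommends for it."""
    return ROUTES[workload]


if __name__ == "__main__":
    for lane in Workload:
        print(lane.value, "->", pick_model(lane))
```

Keeping the mapping in one table makes the eventual removal of the legacy lane a one-line change rather than a search through call sites.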

A practical migration plan from GPT-5.2

If your team already uses GPT-5.2, the right migration is measured rather than ideological.

- Split your workloads before you test. Separate high-volume agent loops, coding-heavy work, and genuinely frontier-style jobs instead of treating "GPT-5.2 traffic" as one blob.

- Test GPT-5.4 mini first on the high-volume lane. That is the lane where the pricing and rate-limit gains are most likely to matter immediately.

- Test GPT-5.4 separately on the frontier lane. Do not force GPT-5.4 mini to answer a question that really belongs to GPT-5.4.

- Measure actual cost, throughput, and failure recovery. Published prices and limits matter, but your real prompt mix matters more.

- Keep GPT-5.2 only as long as it earns its keep. Once it becomes just an inertia lane, remove it.

The point of this plan is simple. You do not need to rip GPT-5.2 out overnight. You do need to stop treating it as the default answer for new work.
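The "measure actual cost, throughput, and failure recovery" step can start from logged usage records. The sketch below assumes you already log one record per call with hypothetical fields `model`, `input_tokens`, `output_tokens`, and `succeeded`; it is not tied to any particular SDK, and it only prices the two models whose rates this article quotes.

```python
from collections import defaultdict

# USD per 1M tokens as (input, output), taken from this article's table.
# GPT-5.4 is left out because its prices are not quoted here.
PRICE_PER_M = {
    "gpt-5.4-mini": (0.75, 4.50),
    "gpt-5.2": (1.75, 14.00),
}


def summarize(runs):
    """Aggregate call count, success rate, and estimated cost per model."""
    totals = defaultdict(lambda: {"calls": 0, "ok": 0, "cost": 0.0})
    for run in runs:
        in_price, out_price = PRICE_PER_M[run["model"]]
        entry = totals[run["model"]]
        entry["calls"] += 1
        entry["ok"] += 1 if run["succeeded"] else 0
        entry["cost"] += (
            run["input_tokens"] * in_price + run["output_tokens"] * out_price
        ) / 1_000_000
    return {
        model: {
            "calls": t["calls"],
            "success_rate": t["ok"] / t["calls"],
            "cost_usd": t["cost"],
        }
        for model, t in totals.items()
    }


if __name__ == "__main__":
    # Hypothetical log entries for the same task replayed on both lanes.
    sample = [
        {"model": "gpt-5.2", "input_tokens": 200_000, "output_tokens": 50_000, "succeeded": True},
        {"model": "gpt-5.4-mini", "input_tokens": 200_000, "output_tokens": 50_000, "succeeded": True},
        {"model": "gpt-5.4-mini", "input_tokens": 200_000, "output_tokens": 50_000, "succeeded": False},
    ]
    for model, stats in summarize(sample).items():
        print(model, stats)
```

Comparing success rate alongside cost matters here: a cheaper lane that fails more often can end up costing more after retries, which is why the plan asks for real workloads rather than published prices alone.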

Final verdict

For most new builds in March 2026, GPT-5.4 mini is the better choice than GPT-5.2. It is much cheaper, has much higher published rate limits, and sits in the part of OpenAI's current lineup that is explicitly meant for high-volume coding and agent workflows.

GPT-5.2 still matters only when you are measuring, migrating, or preserving a legacy baseline. And if you came in looking for the modern successor to GPT-5.2's frontier lane, the answer is GPT-5.4, not GPT-5.4 mini.

FAQ

Is GPT-5.4 mini cheaper than GPT-5.2?

Yes. As checked on March 21, 2026, OpenAI's model pages list GPT-5.4 mini at $0.75 input / $4.50 output per 1M tokens and GPT-5.2 at $1.75 input / $14.00 output per 1M tokens.

Does GPT-5.4 mini replace GPT-5.2?

Not directly in the frontier lane. OpenAI's current model guide says GPT-5.4 replaced GPT-5.2 in the API, while GPT-5.4 mini is the smaller, faster branch for high-volume coding and agent workflows.

Do GPT-5.4 mini and GPT-5.2 have the same context window?

Yes, according to the live model pages checked for this article. Both currently list 400K context, 128K max output, and an Aug 31, 2025 knowledge cutoff.