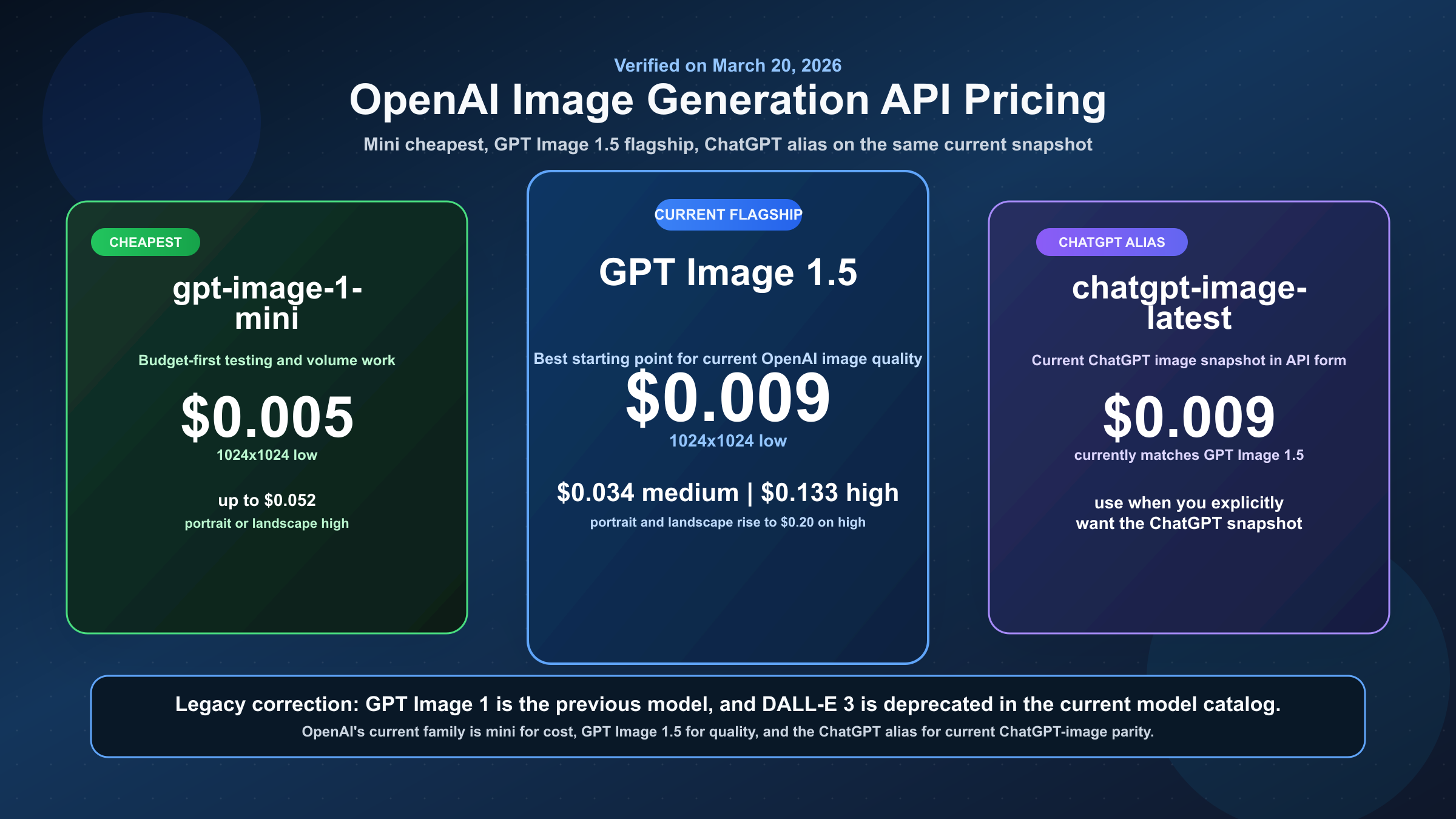

Short answer as of March 20, 2026: OpenAI image generation API pricing currently runs from $0.005 to $0.20 per output image, depending on which model, quality tier, and size you choose. The current flagship lane is GPT Image 1.5. The cheapest current lane is gpt-image-1-mini. The ChatGPT-facing alias chatgpt-image-latest currently matches GPT Image 1.5 pricing. And the most important caveat is that those per-image numbers cover the generated image output, not always the full request bill.

That last point is where most ranking pages still waste the reader's time. A simple image-generation request can be budgeted almost like a clean pay-per-image product. But once you move into longer prompts, image editing, multiple reference images, or higher-fidelity inputs, OpenAI also bills text input and image input tokens. If you budget from the output-image table alone, you can still underestimate what your request actually costs.

This is why the real question under "openai image generation api pricing" is not just "what is the price?" It is "which OpenAI image lane am I paying for, and when does the real request total stop behaving like a simple price-per-image chart?" The current SERP does not answer that well. Official pages are accurate but fragmented across the OpenAI pricing page and the image-generation guide. Calculators are convenient but often stale or incomplete. This guide is designed to bridge that gap.

TL;DR

If you only need the fast answer, use the table below. It is based on the official OpenAI image-generation guide and model pages rechecked on March 20, 2026.

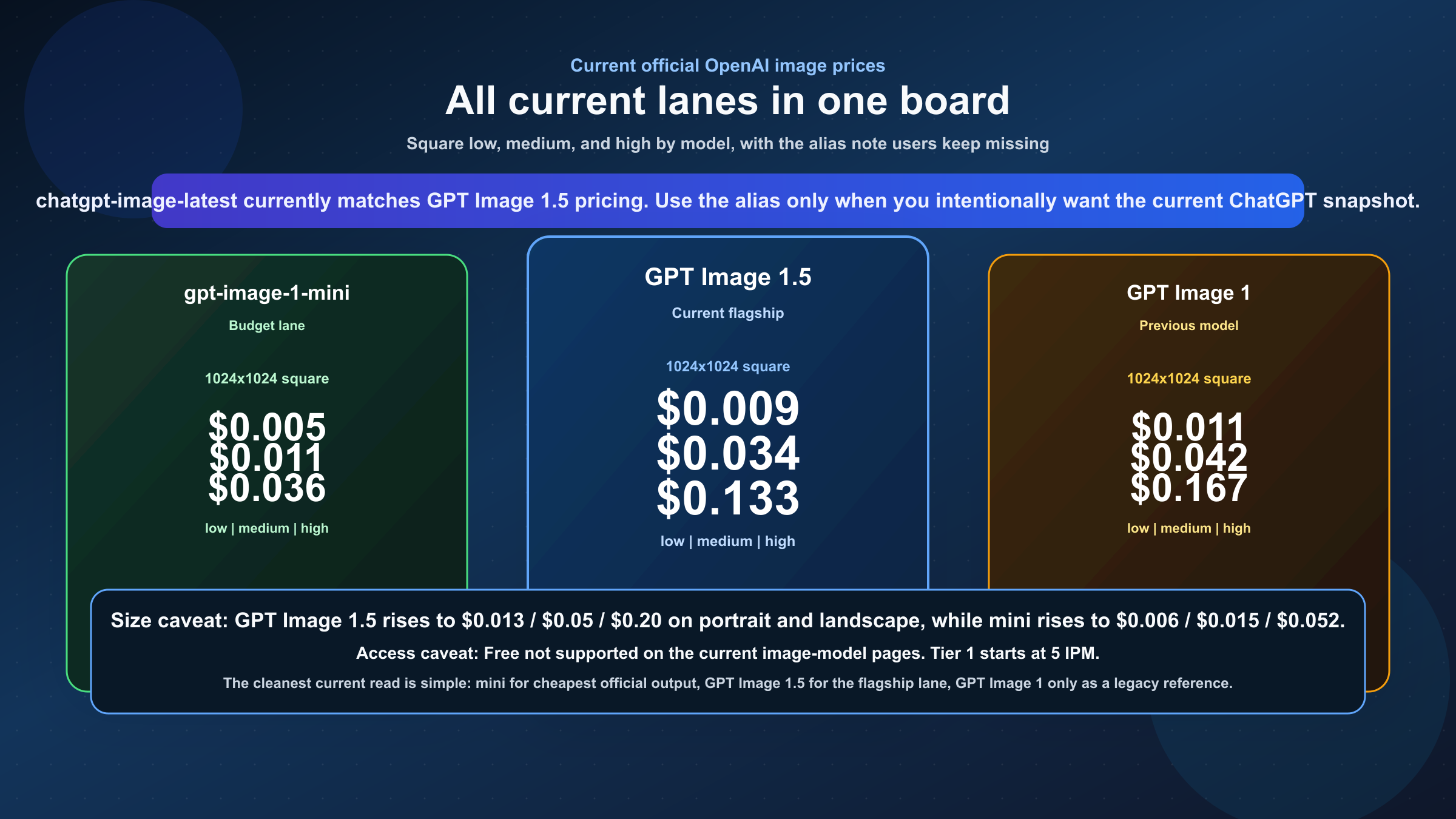

| Model | Current role | 1024x1024 low | 1024x1024 medium | 1024x1024 high | Portrait / landscape (low / medium / high) | Best fit |
|---|---|---|---|---|---|---|
| GPT Image 1.5 | Current flagship | $0.009 | $0.034 | $0.133 | $0.013 / $0.05 / $0.20 | Best default when prompt following, editing, and overall quality matter most |
| gpt-image-1-mini | Current cheapest lane | $0.005 | $0.011 | $0.036 | $0.006 / $0.015 / $0.052 | High-volume, budget-first generation and prototyping |
| chatgpt-image-latest | ChatGPT alias | $0.009 | $0.034 | $0.133 | $0.013 / $0.05 / $0.20 | Useful when you specifically want the current ChatGPT image snapshot, but less clean than a stable production model ID |
| GPT Image 1 | Previous model | $0.011 | $0.042 | $0.167 | $0.016 / $0.063 / $0.25 | Legacy compatibility only; no longer the best current default |

Three practical conclusions follow from that table.

First, GPT Image 1.5 is the current OpenAI default. If you find a page that still explains OpenAI image pricing as though GPT Image 1 were the flagship, you are reading a partly stale answer.

Second, gpt-image-1-mini is the real entry point for cost-sensitive API usage. The broad keyword often gets answered with GPT Image 1.5 because it is the flagship, but the cheapest current official price floor is mini, not the flagship.

Third, the table above is not the whole invoice. For simple one-shot generation it is often close enough. For editing or reference-image workflows it is not.
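For readers who prefer to budget in code, the table can be encoded as a small lookup. This is a minimal sketch: the dictionary layout and the `output_price` helper are illustrative names, not part of any OpenAI SDK, and the numbers are output-only prices copied from the table above.

```python
# Output-image prices in USD per image, copied from the table above.
# "square" is 1024x1024; "non_square" is the portrait / landscape column.
OUTPUT_PRICES = {
    "gpt-image-1.5": {
        "square": {"low": 0.009, "medium": 0.034, "high": 0.133},
        "non_square": {"low": 0.013, "medium": 0.05, "high": 0.20},
    },
    "gpt-image-1-mini": {
        "square": {"low": 0.005, "medium": 0.011, "high": 0.036},
        "non_square": {"low": 0.006, "medium": 0.015, "high": 0.052},
    },
    "gpt-image-1": {
        "square": {"low": 0.011, "medium": 0.042, "high": 0.167},
        "non_square": {"low": 0.016, "medium": 0.063, "high": 0.25},
    },
}
# The alias currently matches GPT Image 1.5 pricing, so it shares that entry.
OUTPUT_PRICES["chatgpt-image-latest"] = OUTPUT_PRICES["gpt-image-1.5"]


def output_price(model: str, quality: str, square: bool = True) -> float:
    """Return the output-only price for one image (KeyError on unknown input)."""
    shape = "square" if square else "non_square"
    return OUTPUT_PRICES[model][shape][quality]


# Find the lane with the lowest price for a low-quality square image.
cheapest = min(OUTPUT_PRICES, key=lambda m: output_price(m, "low"))
print(cheapest, output_price(cheapest, "low"))
```

Keeping the alias as a reference to the flagship entry mirrors the current state of the pricing pages; if the alias ever moves, it would need its own entry.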

Current OpenAI Image Prices by Model

The most useful way to read OpenAI's current image pricing is to stop thinking in terms of one universal "OpenAI image API price." OpenAI now exposes a small family of image lanes, and each one answers a different budget question.

GPT Image 1.5 is the current top lane. OpenAI's December 16, 2025 release note introduced it as the new image model in ChatGPT and in the API, and the current model catalog now lists it ahead of GPT Image 1. In practice, this is the lane to start with if your output quality, text rendering, and editing reliability matter more than squeezing every last cent out of the request. It is also materially cheaper than GPT Image 1 in the official docs, which is one reason older price screenshots are now misleading.

gpt-image-1-mini is the price floor that broad keyword pages often bury. If your workload is prototype-heavy, volume-heavy, or simply cost-first, mini is the lane you should benchmark before you assume the flagship is necessary. It is not marketed as "mini 1.5," and that detail matters. The docs still position it as a cost-efficient version of GPT Image 1, not as a mini version of GPT Image 1.5. That does not make it bad. It simply means you should treat it as the budget lane on its own terms instead of assuming it inherits every GPT Image 1.5 strength at lower cost.

chatgpt-image-latest exists to expose the image snapshot currently used in ChatGPT. This is where many readers get tripped up. The current alias pricing matches GPT Image 1.5, so at the moment it is fair to say the two have the same visible price ladder. But they are not the same kind of decision. If you want a stable model choice for a long-lived production workflow, gpt-image-1.5 is the cleaner object to reason about. If you specifically want the ChatGPT image snapshot and are comfortable with alias behavior, chatgpt-image-latest is a valid surface.

GPT Image 1 is now the previous lane. It still matters because exact-match search can still show it first, and a lot of older guides still frame it as the flagship. But if you are starting a new build in March 2026, it is not the first pricing page you should anchor on.

There is also a legacy DALL-E layer still visible across the web. OpenAI's current model catalog marks DALL-E 3 and DALL-E 2 as deprecated. That does not mean the entire internet stopped writing about them. It means you should not let a DALL-E-heavy answer become your mental model for current OpenAI image pricing unless you are working on an old migration path.

The practical reading is simple: benchmark GPT Image 1.5 when you need the strongest current OpenAI image lane, benchmark mini when the budget is the first constraint, use chatgpt-image-latest only when you intentionally want the ChatGPT snapshot, and treat GPT Image 1 as a legacy reference rather than a default.
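In code, choosing a lane mostly comes down to which model ID, quality, and size you put in the request. The sketch below only builds and validates those arguments. The helper name `build_image_request` is hypothetical, the size strings are an assumption about the supported portrait and landscape sizes, and the commented call assumes the official `openai` Python SDK with an API key in the environment.

```python
# The three knobs that drive the per-image output price.
ALLOWED_QUALITIES = {"low", "medium", "high"}
ALLOWED_SIZES = {"1024x1024", "1024x1536", "1536x1024"}  # assumed size strings


def build_image_request(prompt: str, model: str = "gpt-image-1-mini",
                        quality: str = "low", size: str = "1024x1024") -> dict:
    """Validate the price-relevant settings and return request keyword arguments."""
    if quality not in ALLOWED_QUALITIES:
        raise ValueError(f"unsupported quality: {quality}")
    if size not in ALLOWED_SIZES:
        raise ValueError(f"unsupported size: {size}")
    return {"model": model, "prompt": prompt, "quality": quality, "size": size}


# Pin the stable flagship ID explicitly instead of relying on the alias.
params = build_image_request(
    "A flat-style icon of a paper plane",
    model="gpt-image-1.5",
    quality="medium",
)
print(params)

# With the SDK installed and OPENAI_API_KEY set, the request would look like:
#   from openai import OpenAI
#   result = OpenAI().images.generate(**params)
```

Defaulting to mini at low quality keeps accidental spend small; the flagship has to be requested on purpose.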

If your actual buying decision is OpenAI versus Google's current image stack rather than only OpenAI pricing, our comparison of Nano Banana 2 vs GPT Image 1.5 is the better next read.

What Actually Gets Billed Beyond the Output Image

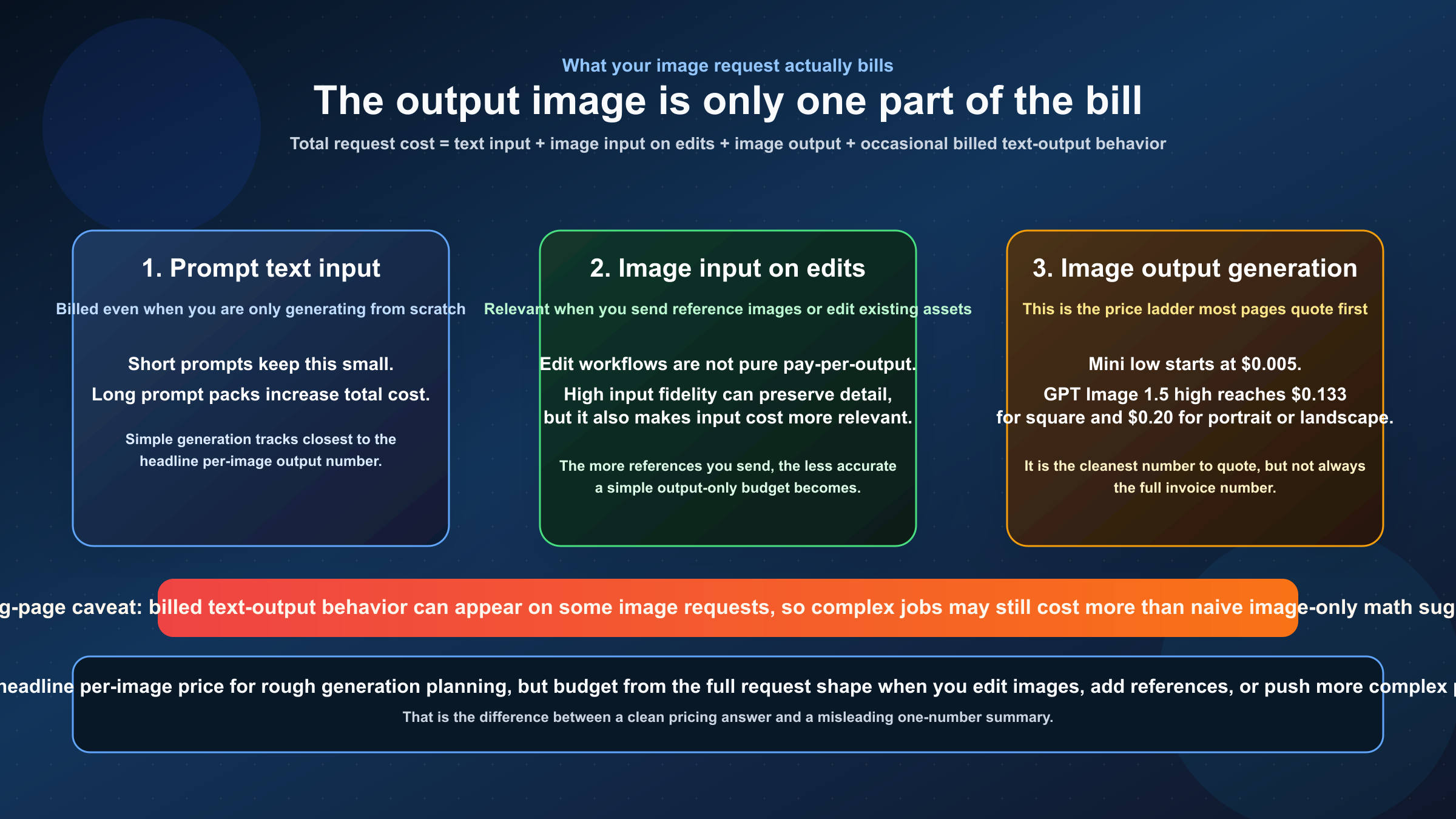

This is the part that weak pricing pages almost always compress too aggressively.

When people quote $0.009 for GPT Image 1.5 low or $0.005 for mini low, they are quoting the output-image generation price from the official image-generation guide. That is useful, but it is not the complete cost model for every request. OpenAI's own guide is explicit that the per-image tables cover the generated image output. The total request cost can also include text input tokens and image input tokens.

In plain English, that means your mental model should look more like this:

total request cost = text input + image input (if editing or using reference images) + image output + some billed text output behavior on certain requests

If you are doing plain generation from a short prompt, the output-image price often dominates and the simple per-image estimate is usually close enough for planning. But the moment you start doing edit workflows, multi-image references, or higher-fidelity input preservation, the request stops looking like a pure pay-per-output-image product.
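The same cost model can be written as a small estimator. This is a sketch under stated assumptions: the `TokenRates` fields and `estimate_request_cost` are illustrative names, and the token rates in the example are placeholders chosen for easy arithmetic, not OpenAI's actual prices. Fill them in from the official pricing page before relying on the result.

```python
from dataclasses import dataclass


@dataclass
class TokenRates:
    """USD per 1M tokens. Take the real values from the official pricing page."""
    text_input: float
    image_input: float
    text_output: float


def estimate_request_cost(output_image_price: float, rates: TokenRates,
                          text_input_tokens: int, image_input_tokens: int = 0,
                          text_output_tokens: int = 0, n_images: int = 1) -> float:
    """Output-image price plus the token-billed parts of one request."""
    token_cost = (
        text_input_tokens * rates.text_input
        + image_input_tokens * rates.image_input
        + text_output_tokens * rates.text_output
    ) / 1_000_000
    return n_images * output_image_price + token_cost


# Placeholder rates for illustration only, NOT OpenAI's actual token prices.
placeholder = TokenRates(text_input=10.0, image_input=20.0, text_output=30.0)

# Short prompt, one fresh GPT Image 1.5 medium square output ($0.034).
simple = estimate_request_cost(0.034, placeholder, text_input_tokens=50)

# Edit-style request: longer prompt, reference-image tokens, some text output.
edit = estimate_request_cost(0.034, placeholder, text_input_tokens=400,
                             image_input_tokens=2000, text_output_tokens=100)

print(f"simple generation: ${simple:.4f}, edit with references: ${edit:.4f}")
```

Even with made-up rates, the shape of the result is the point: the simple request stays close to the output-image price, while the edit-style request can drift well above it.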

This matters for two kinds of teams in particular.

The first is the team that edits existing images instead of generating from scratch. OpenAI's guide highlights that GPT Image 1.5 can preserve the first five input images at higher fidelity when input_fidelity is set to high. That is a strong feature if you care about preserving composition, branding, or likeness across edits. It is also exactly the kind of workflow where image input tokens become more meaningful and a naive "one image equals this flat cost" model becomes less accurate.

The second is the team that sees strange billing lines and assumes something is wrong. There is a real developer-friction layer here. Community threads around GPT Image 1.5 billing show that developers do run into image requests with billed text-output behavior in addition to image output. OpenAI's pricing docs also surface that text output can include model reasoning tokens. The safest way to use that information is not to over-dramatize it. The right conclusion is simply this: if your request is more complex than one short prompt to one fresh output, budget from the full request structure, not the output-image chart alone.

That is also why calculators can mislead. A calculator is still useful for rough monthly planning. It is much less reliable as a full source of truth when your workflow involves edits, references, or large prompt payloads. This is one place where the official docs really are better than the prettier summary pages, even if the official docs make you work harder to connect the pieces.

Which OpenAI Image Model Should You Choose?

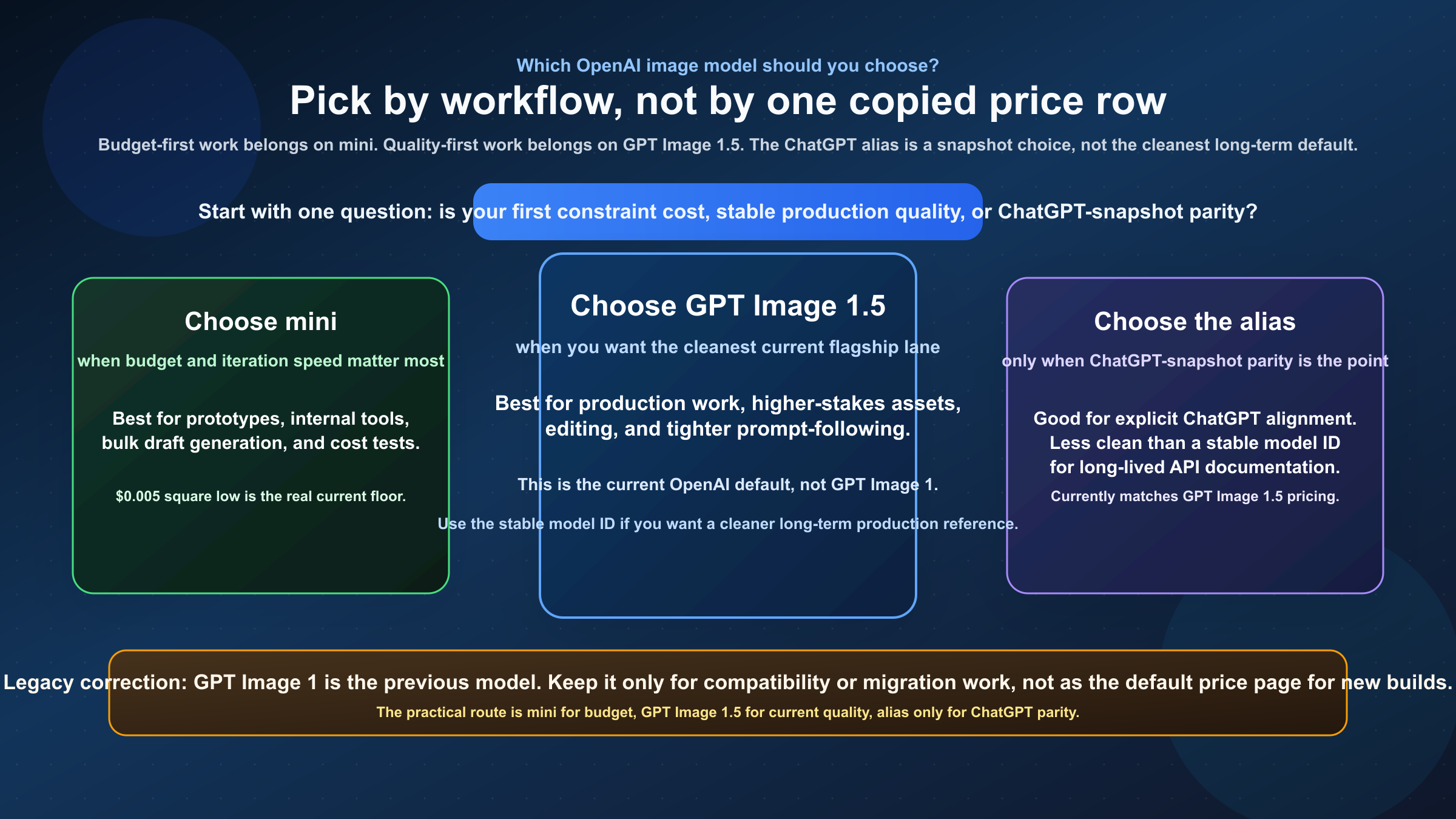

Once the pricing mechanics are clear, the model choice becomes much simpler.

If your goal is the strongest current OpenAI image lane, start with GPT Image 1.5. That is the right default for most production teams who care about quality, editing, and predictable prompt adherence more than shaving every request down to the absolute minimum. OpenAI's own current product framing around GPT Image 1.5 is about stronger editing, better prompt following, and a cleaner flagship lane than GPT Image 1. Those are not abstract benchmark wins. They map directly to real costs: fewer retries, less cleanup, and less time lost deciding whether the model is the old one or the current one.

If your goal is the lowest reasonable official OpenAI image cost, start with gpt-image-1-mini. This is the lane that makes sense for fast iteration, internal prototypes, bulk image generation, and any workload where the premium for the flagship is hard to justify. Mini is also the lane most likely to be underused by readers who only scan GPT Image 1.5 summaries and never pause to benchmark the actual budget option.

If your goal is to align with whatever snapshot ChatGPT is currently using, then chatgpt-image-latest is understandable, but it is usually not the cleanest long-term decision. Aliases are useful precisely because they can move. That is helpful when you want current behavior with minimal mental overhead. It is less helpful when you want a stable production contract that you can document, benchmark, and revisit in six months without wondering whether the alias moved underneath you.

If your goal is legacy compatibility, GPT Image 1 still exists as a reference point. But that should usually sound like a migration decision, not like a new-buy decision.

Use the matrix below as the practical routing answer.

| Situation | Better default | Why |
|---|---|---|
| Cheapest testing and internal prototypes | gpt-image-1-mini | The cost floor is lower, and this is the right place to discover whether the job really needs the flagship |
| Client-facing production work where output quality matters | GPT Image 1.5 | This is the current flagship lane and the safer default when retries and cleanup are expensive |
| Editing existing assets or preserving visual details | GPT Image 1.5 | The feature framing and input-fidelity behavior make the flagship easier to justify on edit-heavy work |
| "I want the same image lane ChatGPT is using right now" | chatgpt-image-latest | Valid if you intentionally want the alias, but usually less clean than choosing a stable model ID |
| Old workflows still pinned to previous pricing assumptions | Re-check before keeping GPT Image 1 | GPT Image 1 is now the previous model, so treat it as a legacy lane, not the default assumption |

A lot of teams overcomplicate this. The clean version is: start mini if cost is the first question, start GPT Image 1.5 if output quality is the first question, and only use chatgpt-image-latest when you actually mean the ChatGPT snapshot.
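That routing rule is simple enough to express as a function. The sketch below is one possible encoding, not an official recommendation engine: `pick_image_model` and its flags are hypothetical names, and the precedence order (alias intent first, then editing, then cost) is an assumption about how to break ties.

```python
def pick_image_model(cost_first: bool = False,
                     edits_existing_assets: bool = False,
                     want_chatgpt_snapshot: bool = False) -> str:
    """Map the routing matrix in this guide to a model ID."""
    if want_chatgpt_snapshot:
        # Only when you intentionally want whatever ChatGPT is using right now.
        return "chatgpt-image-latest"
    if edits_existing_assets:
        # Edit-heavy work is where the flagship is easiest to justify.
        return "gpt-image-1.5"
    if cost_first:
        # Prototypes and bulk generation start at the price floor.
        return "gpt-image-1-mini"
    # Default: quality-first production work.
    return "gpt-image-1.5"


print(pick_image_model(cost_first=True))
print(pick_image_model(cost_first=True, edits_existing_assets=True))
```

Note that the second call returns the flagship even though cost is flagged, because this sketch treats editing as the stronger signal; swap the order if your budget constraint is hard.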

For rough planning, the monthly math gets concrete very quickly. 1,000 square outputs come to roughly $5 on mini low, $11 on mini medium, $34 on GPT Image 1.5 medium, and $133 on GPT Image 1.5 high before prompt and edit-path costs. At 10,000 square outputs, those same lanes become about $50, $110, $340, and $1,330. That is why the flagship-versus-mini decision should be made at the workflow level, not as an afterthought.
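The arithmetic behind those figures is a single multiplication per lane, reproduced here as a short script. The helper name `monthly_output_cost` is illustrative, and the result covers output images only, before prompt and edit-path costs.

```python
def monthly_output_cost(price_per_image: float, images_per_month: int) -> float:
    """Output-only monthly spend in USD, rounded to cents."""
    return round(price_per_image * images_per_month, 2)


# Square (1024x1024) output prices from the pricing table in this guide.
lanes = {
    "mini low": 0.005,
    "mini medium": 0.011,
    "GPT Image 1.5 medium": 0.034,
    "GPT Image 1.5 high": 0.133,
}

for volume in (1_000, 10_000):
    for lane, price in lanes.items():
        cost = monthly_output_cost(price, volume)
        print(f"{volume:>6} x {lane}: ${cost:,.2f}")
```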

Why Older OpenAI Image Pricing Pages Still Disagree

The disagreement across search results is not random. It comes from a real product transition.

On December 16, 2025, OpenAI introduced the new Images model in ChatGPT and said it was available in the API as GPT Image 1.5. The same release note said image inputs and outputs were 20% cheaper in GPT Image 1.5 than in GPT Image 1. That means any page built around the old GPT Image 1 cost ladder without a freshness update is now only partially right at best.

This is why the exact query produces such an awkward first page. Official model cards rank because they are precise and current, but they answer one slice at a time. GPT Image 1 still ranks because it is official and extractable, even though it now describes the previous lane. GPT Image 1.5 ranks because it is current. The ChatGPT alias page ranks because the market mixes ChatGPT image behavior with API behavior. Then calculators and gateway pages squeeze into the same field because users clearly want one total answer instead of a pile of model cards.

That fragmentation also explains why stale claims survive longer than they should. A calculator page can keep ranking even if one sentence in it is outdated. During research for this article, one visible calculator page was still pushing a "free credits for new users" framing for image usage, while the current official image model pages show "Free not supported" and start the current image lanes at Tier 1 with 5 IPM. The page still ranks because it is useful in structure, not because every line in it is current.

So when you see pricing disagreement in this topic, do not assume OpenAI has some mysterious hidden price grid. In most cases one of three things is happening:

- the page is talking about a different model lane

- the page is quoting output-image pricing but not full request pricing

- the page was written before GPT Image 1.5 became the current flagship

Once you separate those three cases, the pricing picture becomes much less chaotic.

Access Tiers, Batch Pricing, and Other Planning Caveats

There are three final caveats worth keeping in your mental model before you set a budget or promise a product team a certain throughput number.

The first is access. On the current official model pages, OpenAI's image-generation lanes show "Free not supported". The current GPT Image 1.5 and alias pages list Tier 1 at 100,000 TPM and 5 IPM, then 20 IPM at Tier 2, 50 IPM at Tier 3, 150 IPM at Tier 4, and 250 IPM at Tier 5. In other words, this is not a surface you should plan to test indefinitely from a true free account. If your actual blocker is access rather than pricing, start with how to get an OpenAI API key and the current OpenAI API key requirements before you keep debugging image requests.
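The IPM limits translate directly into a floor on how long a bulk job takes. The sketch below computes that floor from the tier figures quoted above. It is an optimistic lower bound only: `min_minutes` is a hypothetical helper, and it ignores the TPM limit, per-request latency, and retries.

```python
import math

# Images per minute by usage tier, as quoted above for GPT Image 1.5 and the alias.
IPM_BY_TIER = {1: 5, 2: 20, 3: 50, 4: 150, 5: 250}


def min_minutes(images: int, tier: int) -> int:
    """Lower bound on wall-clock minutes for a job, from the IPM limit alone."""
    return math.ceil(images / IPM_BY_TIER[tier])


for tier in IPM_BY_TIER:
    minutes = min_minutes(10_000, tier)
    print(f"Tier {tier}: 10,000 images need at least {minutes} minutes")
```

At Tier 1, a 10,000-image job needs at least 2,000 minutes, which is more than a full day of continuous requests. For volume workloads, the tier can be a harder constraint than the price.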

The second is Batch pricing. OpenAI's pricing page currently halves the token rates for GPT Image 1.5 and mini in Batch. For pure generation workloads, that effectively means the output-image component is roughly halved as well. A background run that would land around $340 in standard output-only pricing for 10,000 GPT Image 1.5 medium square outputs moves closer to $170 in Batch before prompt and edit-path costs. That can be a real savings lever if you are running non-interactive jobs. It is not a magic discount for every use case. If your application needs immediate user-facing results, Batch is the wrong answer no matter how nice the discount looks in a spreadsheet.
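The Batch estimate above can be reproduced with one extra multiplier. This sketch assumes, as the paragraph does, that halved token rates translate into a roughly halved output-image component for pure generation. `BATCH_MULTIPLIER` and `output_cost` are illustrative names, and the result still excludes prompt and edit-path costs.

```python
# Assumption: Batch roughly halves the output-image component for pure generation.
BATCH_MULTIPLIER = 0.5


def output_cost(price_per_image: float, images: int, batch: bool = False) -> float:
    """Output-only cost in USD, optionally with the assumed Batch discount."""
    multiplier = BATCH_MULTIPLIER if batch else 1.0
    return round(price_per_image * images * multiplier, 2)


# 10,000 GPT Image 1.5 medium square outputs at $0.034 each.
standard = output_cost(0.034, 10_000)
batched = output_cost(0.034, 10_000, batch=True)
saving = standard - batched
print(f"standard ${standard:,.2f} vs Batch ${batched:,.2f}, saving ${saving:,.2f}")
```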

The third is surface confusion. ChatGPT subscription pricing is not API pricing. A ChatGPT plan can change what image generation you get in ChatGPT itself, but it does not replace the API billing model. The broad search term often attracts readers who are actually comparing app behavior, API behavior, and third-party routing all at once. For production work, keep those surfaces separate or your budget will get sloppy fast.

If you are already funded but still hitting quota or tier errors, our guide to the OpenAI API key quota exceeded error is the better next step than continuing to stare at the pricing page alone.

FAQ

Is GPT Image 1.5 the current OpenAI image flagship?

Yes. As checked on March 20, 2026, OpenAI's current model catalog lists GPT Image 1.5 as the main current image model and labels GPT Image 1 as the previous image generation model.

What is the cheapest current OpenAI image API price?

The cheapest current official floor is gpt-image-1-mini low at $0.005 for a 1024x1024 output image. GPT Image 1.5 starts at $0.009 for the same low square output.

Does chatgpt-image-latest have different pricing from GPT Image 1.5?

Not at the moment. The current official alias page matches GPT Image 1.5 pricing. But it is still useful to think of it as a ChatGPT alias rather than the cleanest stable model choice for a long-lived API workflow.

Why does my invoice look higher than the per-image output chart?

Because the visible per-image tables are mainly about output generation. Text input tokens, image input tokens on edits, and some billed text-output behavior can raise the total request cost above the output-only number.

Is there a free tier for OpenAI image generation API?

The current official image model pages show "Free not supported" for GPT Image 1.5, mini, and the ChatGPT alias. For current image generation, treat paid Tier 1 access as the real starting point.

Should I still use GPT Image 1 or DALL-E 3?

Only if you have a specific migration or compatibility reason. For a fresh 2026 pricing decision, GPT Image 1.5 and mini are the lanes that matter most.