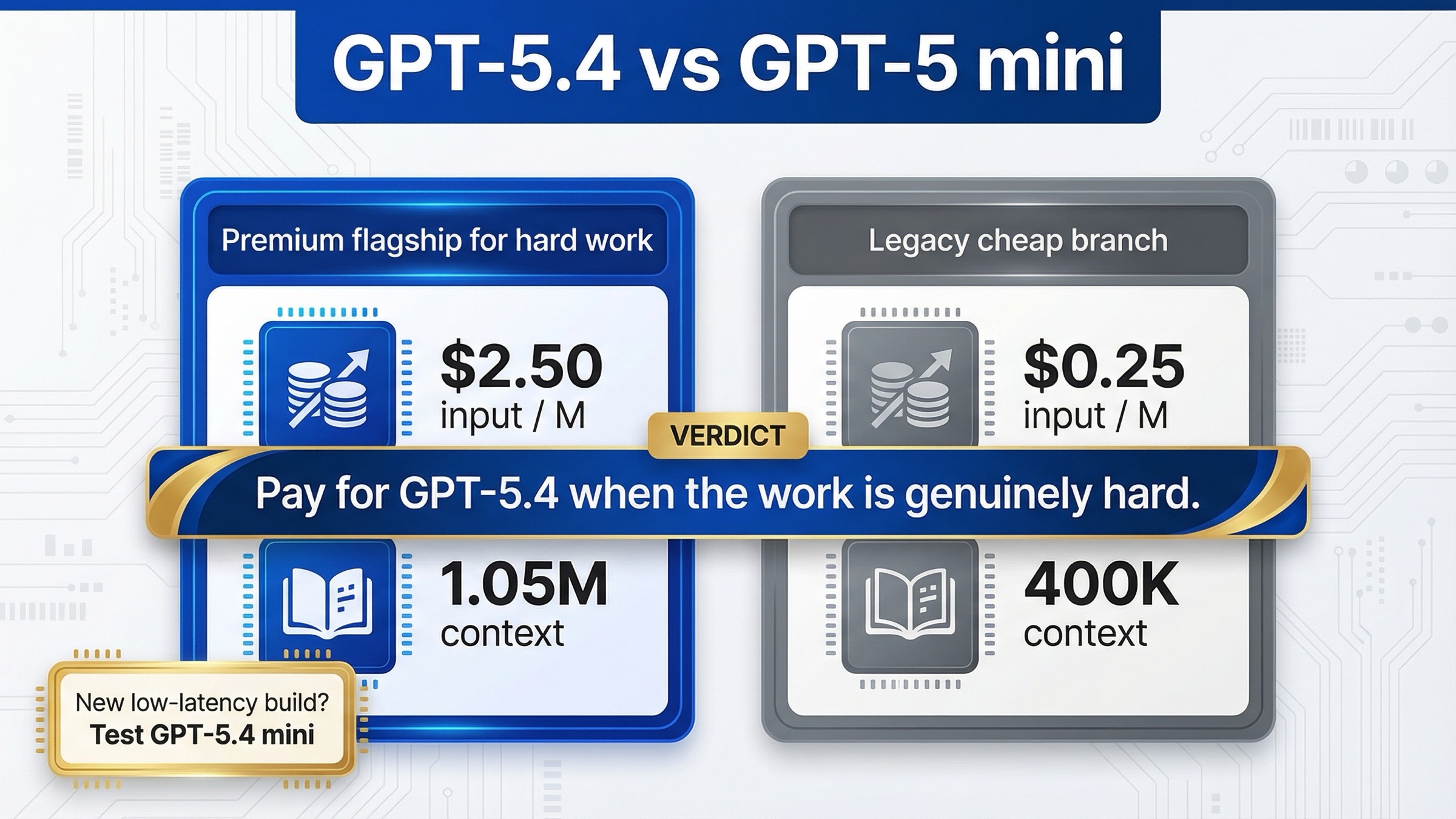

As of March 20, 2026, GPT-5.4 is the better default for serious coding, agent, and professional API work, while GPT-5 mini is only the right choice when the workload is stable, tool-light, and so cost-sensitive that the price gap matters more than the capability gap. That is the short answer. GPT-5.4 launched on March 5, 2026 as OpenAI's current flagship line for professional work, while the current GPT-5 mini model page still presents it as the cheaper low-latency branch but no longer treats it as the obvious forward-looking default.

The important catch is that this is not a clean "premium model versus current mini model" matchup anymore. The current GPT-5 mini model page now says that for most new low-latency, high-volume workloads, OpenAI recommends starting with gpt-5.4 mini instead. So the real job of this article is not to pretend GPT-5 mini is the modern cheap default. It is to explain when GPT-5.4 is worth paying for, when GPT-5 mini is still rational, and when your comparison should really move to GPT-5.4 mini vs GPT-5 mini.

TL;DR

| Model | Best for | Why you pick it | Main tradeoff |
|---|---|---|---|
| GPT-5.4 | Hard coding tasks, long-context repo work, richer agents, higher-stakes outputs | 1,050,000 context, Aug 31, 2025 cutoff, much broader current tool surface, and stronger official benchmark profile | Very expensive: $2.50 input / $15 output per 1M tokens |
| GPT-5 mini | Stable cost-first text pipelines, simpler structured generation, legacy budget routing | Much cheaper: $0.25 input / $2.00 output per 1M tokens | Older branch, 400,000 context, May 31, 2024 cutoff, and a much thinner tool surface |

If you want one practical rule, use this one: choose GPT-5.4 when failure is expensive, retries are painful, or long context and richer tools change the product. Keep GPT-5 mini only when the job is narrow enough that you would mostly be paying extra for headroom you will not use.

The second rule matters almost as much: if your real goal is a cheaper default for new low-latency work, do not stop here. The current docs point most new small-model routing toward GPT-5.4 mini, not GPT-5 mini.

Why This Comparison Is Harder Than It Looks

Many comparison pages make this choice sound simpler than it is. They frame it as a normal flagship-versus-mini decision, then stop after listing token prices and context windows. That misses the real product situation.

GPT-5.4 and GPT-5 mini are both live models, but they do not occupy the same place in the current lineup. On the official GPT-5.4 model page, GPT-5.4 is positioned as the default frontier model for agentic, coding, and professional workflows. On the official GPT-5 mini model page, GPT-5 mini is positioned as a faster, more cost-efficient version of GPT-5 for well-defined tasks and precise prompts, but the same page also tells developers to start most new low-latency, high-volume builds with GPT-5.4 mini instead.

That changes the shape of the buying decision. If you are choosing between GPT-5.4 and GPT-5 mini, you are usually doing one of three things:

You are deciding whether a premium flagship is worth paying for over a cheaper legacy branch. You are defending an existing GPT-5 mini deployment and deciding whether to keep it. Or you are starting fresh, seeing that the old cheaper option still exists, and wondering whether to skip it entirely and move to the newer mini line.

This is also why the current search results for this comparison still feel thin. The official docs own the raw facts, but they do not fully answer the practical question a team lead has to answer in a planning meeting: should we pay for GPT-5.4, keep GPT-5 mini, or move the cheap path to GPT-5.4 mini instead?

Pricing, Context Window, and Tool Support Side by Side

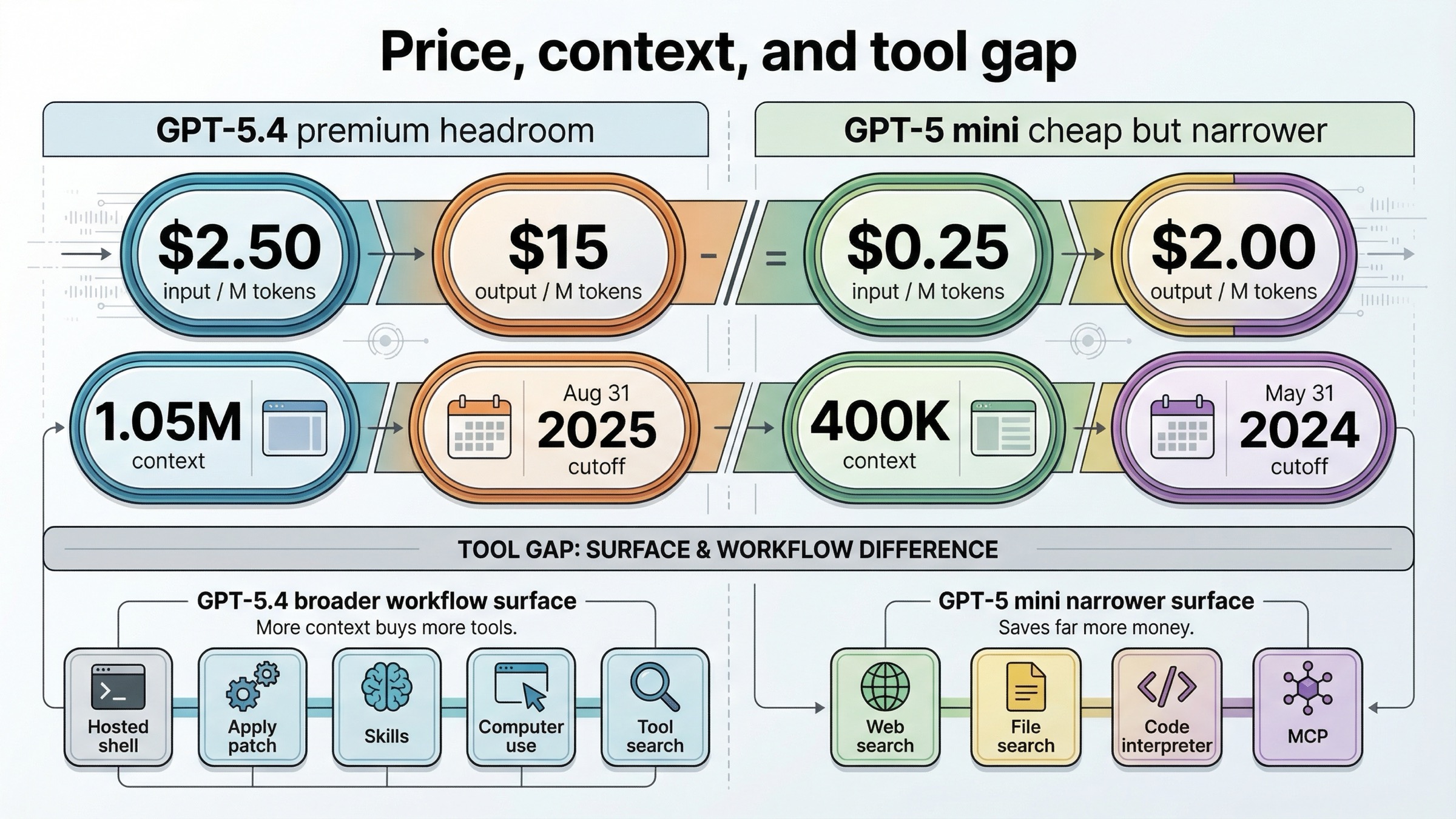

The price gap is not subtle. According to the current GPT-5.4 model page, GPT-5.4 costs $2.50 per 1M input tokens, $0.25 per 1M cached input tokens, and $15.00 per 1M output tokens. According to the current GPT-5 mini model page, GPT-5 mini costs $0.25 per 1M input tokens, $0.025 per 1M cached input tokens, and $2.00 per 1M output tokens.

That means GPT-5.4 is currently 10x the input price, 10x the cached-input price, and 7.5x the output price of GPT-5 mini. If your workload is dominated by huge volumes of simple prompts, that difference can absolutely outweigh the quality gain. This is the single strongest argument for keeping GPT-5 mini alive in a production architecture.
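To make that arithmetic concrete, here is a minimal sketch that recomputes the ratios from the per-1M-token prices quoted above and applies them to a sample volume. The monthly token volume is a hypothetical illustration, not a figure from either model page, and the cost function deliberately ignores cached-input discounts.

```python
# Prices in USD per 1M tokens, copied from the figures quoted above.
PRICES = {
    "GPT-5.4": {"input": 2.50, "cached_input": 0.25, "output": 15.00},
    "GPT-5 mini": {"input": 0.25, "cached_input": 0.025, "output": 2.00},
}


def price_ratios(expensive: str, cheap: str) -> dict[str, float]:
    """Return how many times more each token type costs on `expensive`."""
    return {
        kind: round(PRICES[expensive][kind] / PRICES[cheap][kind], 2)
        for kind in PRICES[expensive]
    }


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a token volume, ignoring cached-input discounts."""
    p = PRICES[model]
    total = input_tokens * p["input"] + output_tokens * p["output"]
    return round(total / 1_000_000, 2)


ratios = price_ratios("GPT-5.4", "GPT-5 mini")

# Hypothetical workload: 2B input tokens and 200M output tokens per month.
cost_flagship = monthly_cost("GPT-5.4", 2_000_000_000, 200_000_000)
cost_mini = monthly_cost("GPT-5 mini", 2_000_000_000, 200_000_000)

print(ratios)
print(f"GPT-5.4: ${cost_flagship:,.2f}  GPT-5 mini: ${cost_mini:,.2f}")
```

Because output tokens carry the smaller 7.5x multiplier, the blended gap for any real workload lands somewhere between 7.5x and 10x depending on its input-to-output mix.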

But the capability gap is also bigger than most cheap-versus-premium summaries admit. GPT-5.4 currently exposes 1,050,000 context with 128,000 max output tokens and an Aug 31, 2025 knowledge cutoff. GPT-5 mini currently exposes 400,000 context, the same 128,000 max output, and a much older May 31, 2024 knowledge cutoff. That is not a tiny recency difference. If your prompts touch newer libraries, 2025-era API changes, or large document and repo contexts, GPT-5.4 starts with a real advantage before prompting strategy even enters the picture.

The tool story matters even more. GPT-5.4 currently supports web search, file search, image generation, code interpreter, hosted shell, apply patch, skills, computer use, MCP, tool search, and distillation on the model page. GPT-5 mini keeps web search, file search, code interpreter, and MCP, but it does not currently list image generation, hosted shell, apply patch, skills, computer use, tool search, or distillation support.

That means the comparison is not just "better model versus cheaper model." It is also "richer workflow surface versus narrower workflow surface." If your system ever needs hosted shell, patch application, broad agent tooling, or browser-style computer use, the price gap is no longer the only number that matters. GPT-5.4 lets you build a different kind of system.
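One way to turn the tool lists above into a quick check is a plain set difference. This is only a sketch: the tool names are copied from the lists above as informal labels, not API identifiers, and the `agent_needs` set is a hypothetical example of what a coding agent might require.

```python
# Tool surfaces as listed above (informal labels, not API identifiers).
GPT_5_4_TOOLS = {
    "web search", "file search", "image generation", "code interpreter",
    "hosted shell", "apply patch", "skills", "computer use", "MCP",
    "tool search", "distillation",
}
GPT_5_MINI_TOOLS = {"web search", "file search", "code interpreter", "MCP"}


def missing_tools(required: set[str], available: set[str]) -> list[str]:
    """Return the required tools that the model does not list, sorted."""
    return sorted(required - available)


# Everything GPT-5.4 lists that GPT-5 mini does not.
flagship_only = missing_tools(GPT_5_4_TOOLS, GPT_5_MINI_TOOLS)

# Hypothetical requirements for a coding agent.
agent_needs = {"file search", "hosted shell", "apply patch"}
blockers = missing_tools(agent_needs, GPT_5_MINI_TOOLS)

print("Flagship-only tools:", flagship_only)
print("Blockers for GPT-5 mini:", blockers)
```

If `blockers` is non-empty for your planned workflow, the price comparison is moot until the workflow changes.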

Another detail many quick comparison pages miss is model age. The current GPT-5.4 snapshot shown on the model page is gpt-5.4-2026-03-05, while the current GPT-5 mini snapshot shown on the model page is gpt-5-mini-2025-08-07. Even before you compare cutoffs, that snapshot difference tells you which branch is currently receiving the flagship product attention.

What The Official Positioning And Benchmarks Actually Say

The official positioning is surprisingly clear if you read the current launch and routing pages together.

On the March 5, 2026 GPT-5.4 launch page, OpenAI calls GPT-5.4 its most capable and efficient frontier model for professional work. The page says GPT-5.4 brings together reasoning, coding, and agentic workflows in a single model, adds native computer-use capability for general-purpose work, supports up to 1M tokens of context, and improves tool search across larger ecosystems of tools and connectors. That is not the language of a niche premium upgrade. It is the language of a flagship default.

Then the March 17, 2026 GPT-5.4 mini and nano launch page explains the smaller-branch philosophy. It says GPT-5.4 mini improves over GPT-5 mini across coding, reasoning, multimodal understanding, and tool use while running more than 2x faster. It also explicitly describes a modern routing pattern: a larger model like GPT-5.4 handles planning, coordination, and final judgment, while GPT-5.4 mini handles narrower supporting tasks in parallel.

That framing tells you something important about GPT-5 mini. It is still available, but it is no longer the model OpenAI is using to describe the future small-model architecture. GPT-5.4 mini is.

The current latest-model guide sharpens that picture further. It treats GPT-5.4 with reasoning effort set to none as a GPT-4.1 replacement and says GPT-5.4 mini is a great replacement for o4-mini or gpt-4.1-mini. In other words, the current official migration map is pointing developers toward the GPT-5.4 line even when they want a cheaper or lower-latency branch.

That does not make GPT-5 mini useless. It does mean you should think of it as a live legacy budget option rather than the obvious modern entry point for new builds.

The most useful official benchmark table for this comparison is not on the GPT-5.4 launch page. It is on the March 17 GPT-5.4 mini launch page, because that table compares GPT-5.4, GPT-5.4 mini, GPT-5.4 nano, and GPT-5 mini in one place.

Here are the numbers that matter for this article:

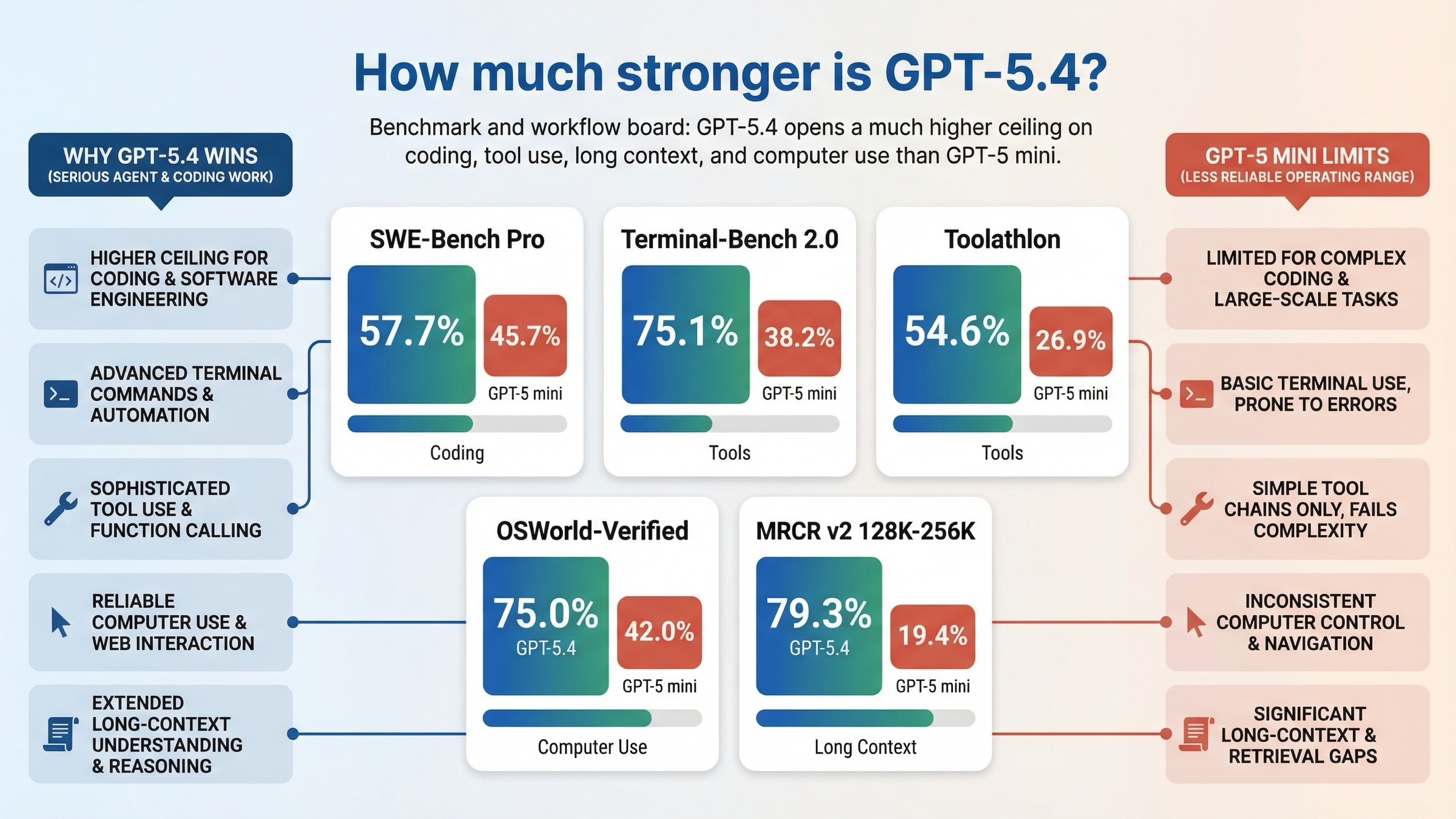

- SWE-Bench Pro (Public): GPT-5.4 scored 57.7% versus 45.7% for GPT-5 mini.

- Terminal-Bench 2.0: GPT-5.4 scored 75.1% versus 38.2% for GPT-5 mini.

- Toolathlon: GPT-5.4 scored 54.6% versus 26.9% for GPT-5 mini.

- GPQA Diamond: GPT-5.4 scored 93.0% versus 81.6% for GPT-5 mini.

- OSWorld-Verified: GPT-5.4 scored 75.0% versus 42.0% for GPT-5 mini.

- OpenAI MRCR v2 8-needle 128K-256K: GPT-5.4 scored 79.3% versus 19.4% for GPT-5 mini.

Those are not tiny wins that only matter in benchmark blog posts. They point to real product-level differences.
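A short sketch that ranks the scores listed above by percentage-point gap makes it easier to see where the difference concentrates. The numbers are copied from the list above; nothing here is new data.

```python
# (GPT-5.4 score, GPT-5 mini score) in percent, from the list above.
BENCHMARKS = {
    "SWE-Bench Pro (Public)": (57.7, 45.7),
    "Terminal-Bench 2.0": (75.1, 38.2),
    "Toolathlon": (54.6, 26.9),
    "GPQA Diamond": (93.0, 81.6),
    "OSWorld-Verified": (75.0, 42.0),
    "OpenAI MRCR v2 8-needle 128K-256K": (79.3, 19.4),
}

# Percentage-point gap per benchmark, rounded to one decimal place.
gaps = {
    name: round(flagship - mini, 1)
    for name, (flagship, mini) in BENCHMARKS.items()
}

# Largest gap first.
ranked = sorted(gaps.items(), key=lambda item: item[1], reverse=True)

for name, gap in ranked:
    print(f"{name}: +{gap:.1f} points")
```

The ranking puts long-context retrieval, terminal work, and computer use at the top, with the knowledge-style GPQA Diamond gap at the bottom.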

The biggest practical delta is not prose quality. It is operating range. GPT-5.4 is much stronger on long-context retrieval, much stronger on terminal-style work, much stronger on tool use, and dramatically stronger on the sort of multimodal computer-use workloads that depend on screenshots and UI interpretation. If your system behaves anything like a coding agent, a repo assistant, a browser operator, or a document-heavy professional copilot, these differences are large enough to change user experience, not just leaderboard bragging rights.

There is one benchmark caveat worth keeping visible. The same OpenAI table notes that the highest reasoning_effort available for GPT-5 mini in that comparison is high, while GPT-5.4 was evaluated at xhigh. So this is a product comparison using the currently exposed best settings, not a perfect same-knob lab bakeoff. That still makes it useful for buyers, because buyers use the product as it actually exists. It just means you should interpret the table as "current shipped capability and settings," not "architecture-only purity test."

The important conclusion is simple: the benchmark gap is big enough that you should not treat GPT-5.4 as a luxury upgrade if your work depends on coding, tools, long context, or UI-heavy tasks.

When GPT-5.4 Is Worth Paying For

GPT-5.4 is worth the premium when the cost of a weaker model is not just worse answers. It is more retries, more workflow glue, more guardrails, more prompt recovery, and more human cleanup.

The first clear case is long-context work. If you run large repo prompts, long document bundles, or multi-file workflows where the model has to keep state across a genuinely large working set, the jump from 400,000 context to 1,050,000 context is not cosmetic. It changes which prompts fit at all, and it reduces the need for manual chunking and orchestration. That is one reason GPT-5.4 is the better fit for repo-scale work and large professional deliverables.
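As a rough illustration of "which prompts fit at all," the sketch below checks a prompt size against each context window and estimates how many chunks a prompt would need. It assumes the reserved output budget counts against the context window, and the 600,000-token prompt is a hypothetical example; real token counts should come from your own tokenizer measurements.

```python
import math

# Context windows and max output, from the figures quoted in this article.
CONTEXT_WINDOW = {"GPT-5.4": 1_050_000, "GPT-5 mini": 400_000}
MAX_OUTPUT = 128_000


def fits(model: str, prompt_tokens: int, reserved_output: int = MAX_OUTPUT) -> bool:
    """True if the prompt plus reserved output fits in one request.

    Assumption: the output budget counts against the context window.
    """
    return prompt_tokens + reserved_output <= CONTEXT_WINDOW[model]


def chunks_needed(model: str, prompt_tokens: int, reserved_output: int = MAX_OUTPUT) -> int:
    """Minimum number of requests if the prompt is split evenly into chunks."""
    budget = CONTEXT_WINDOW[model] - reserved_output
    return math.ceil(prompt_tokens / budget)


# Hypothetical repo-scale prompt of 600,000 tokens.
repo_prompt = 600_000
for model in CONTEXT_WINDOW:
    print(model, fits(model, repo_prompt), chunks_needed(model, repo_prompt))
```

Every extra chunk is orchestration you have to build and test, which is the hidden cost the context gap removes.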

The second clear case is agent tooling. GPT-5.4's current tool surface includes hosted shell, apply patch, skills, computer use, and tool search. GPT-5 mini does not. If your product plan includes real delegation, environment manipulation, code patching, or large tool inventories, GPT-5.4 gives you headroom that GPT-5 mini simply does not advertise today.

The third case is higher-stakes professional output. On the GPT-5.4 launch page, OpenAI leans hard into spreadsheet, presentation, document, and professional knowledge-work performance. If the cost of a mediocre answer is analyst time, legal-review time, engineering time, or user trust, the model premium often becomes easier to justify than it looks from token math alone.

The fourth case is computer use and visual reasoning. GPT-5.4 is explicitly described as OpenAI's first general-purpose model with native state-of-the-art computer-use capability. If your system interprets screenshots, navigates interfaces, or works across real software environments, the benchmark and product framing both point toward GPT-5.4.

Put bluntly, GPT-5.4 is worth paying for when you would otherwise spend engineering time compensating for what the cheaper model does not do as well.
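That tradeoff can be sketched as a simple expected-cost model: one attempt per task, and a fixed cleanup cost whenever the attempt fails. The token prices come from this article, but the success rates, per-task token counts, and cleanup cost below are all made-up placeholders; substitute your own measurements before drawing conclusions.

```python
# USD per 1M tokens, from the pricing quoted in this article.
PRICES = {
    "GPT-5.4": {"input": 2.50, "output": 15.00},
    "GPT-5 mini": {"input": 0.25, "output": 2.00},
}


def attempt_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Token cost in USD of a single attempt."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def expected_cost(token_cost: float, success_rate: float, cleanup_cost: float) -> float:
    """Token cost plus the expected human cleanup cost of a failed attempt."""
    return round(token_cost + (1 - success_rate) * cleanup_cost, 4)


def break_even_cleanup(cost_a: float, rate_a: float, cost_b: float, rate_b: float) -> float:
    """Cleanup cost at which model A and model B have equal expected cost."""
    return round((cost_a - cost_b) / (rate_a - rate_b), 4)


# Hypothetical task: 50K input tokens, 5K output tokens.
flagship_tokens = attempt_cost("GPT-5.4", 50_000, 5_000)
mini_tokens = attempt_cost("GPT-5 mini", 50_000, 5_000)

# Hypothetical success rates (0.9 vs 0.7) and a $5 cleanup cost per failure.
flagship_total = expected_cost(flagship_tokens, 0.9, 5.0)
mini_total = expected_cost(mini_tokens, 0.7, 5.0)
threshold = break_even_cleanup(flagship_tokens, 0.9, mini_tokens, 0.7)

print(flagship_total, mini_total, threshold)
```

Under these placeholder numbers the flagship wins once a failure costs more than about a dollar of human time. With a smaller success-rate gap or near-zero cleanup cost, the cheaper model wins instead, which is exactly the narrow case described in the next section.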

If you are also comparing other premium OpenAI routes, it is worth reading GPT-5.4 vs GPT-5.3-Codex and GPT-5.4 vs GPT-5.4 mini after this one. Those two pages answer the "best premium default" and "best modern cheaper branch" follow-up questions more directly.

When GPT-5 mini Still Makes Sense

GPT-5 mini still makes sense when your workload is narrow enough that GPT-5.4's extra headroom would mostly turn into a bigger bill.

The strongest case is a mature, high-volume text pipeline. If your prompts are stable, your outputs are well defined, your tool usage is shallow, and the business is already happy with the results, the 10x input-price gap matters more than benchmark bragging rights. A cheap model that is already "good enough" can still be the right business decision.

The second case is simple structured generation. If the job is mostly routing, labeling, light extraction, or controlled text production, GPT-5 mini can still be a rational cheap branch, especially if you already know how it behaves in production. In that situation, moving everything to GPT-5.4 just because it is better on terminal and long-context evals may be wasteful.

The third case is dual-route architectures where the heavy path already exists somewhere else. If you already use a stronger model for the complicated work and want the cheapest still-acceptable OpenAI path for the easy work, GPT-5 mini may remain useful as the low-cost lane.

There is also a practical migration reason to keep it for a while: live systems do not migrate instantly just because the vendor has a newer recommendation. If GPT-5 mini is already deployed, already measured, and already acceptable, you do not need to rip it out blindly. You need to benchmark the places where it actually hurts you.

The key is to be honest about what you are keeping it for. GPT-5 mini is a good answer when the system is already narrow and cost-first. It is a weak answer when the workload is drifting toward bigger context, richer tools, modern subagents, or screenshot-heavy tasks.

When You Should Stop And Test GPT-5.4 mini Instead

This is the section many comparison pages should have written in the first place.

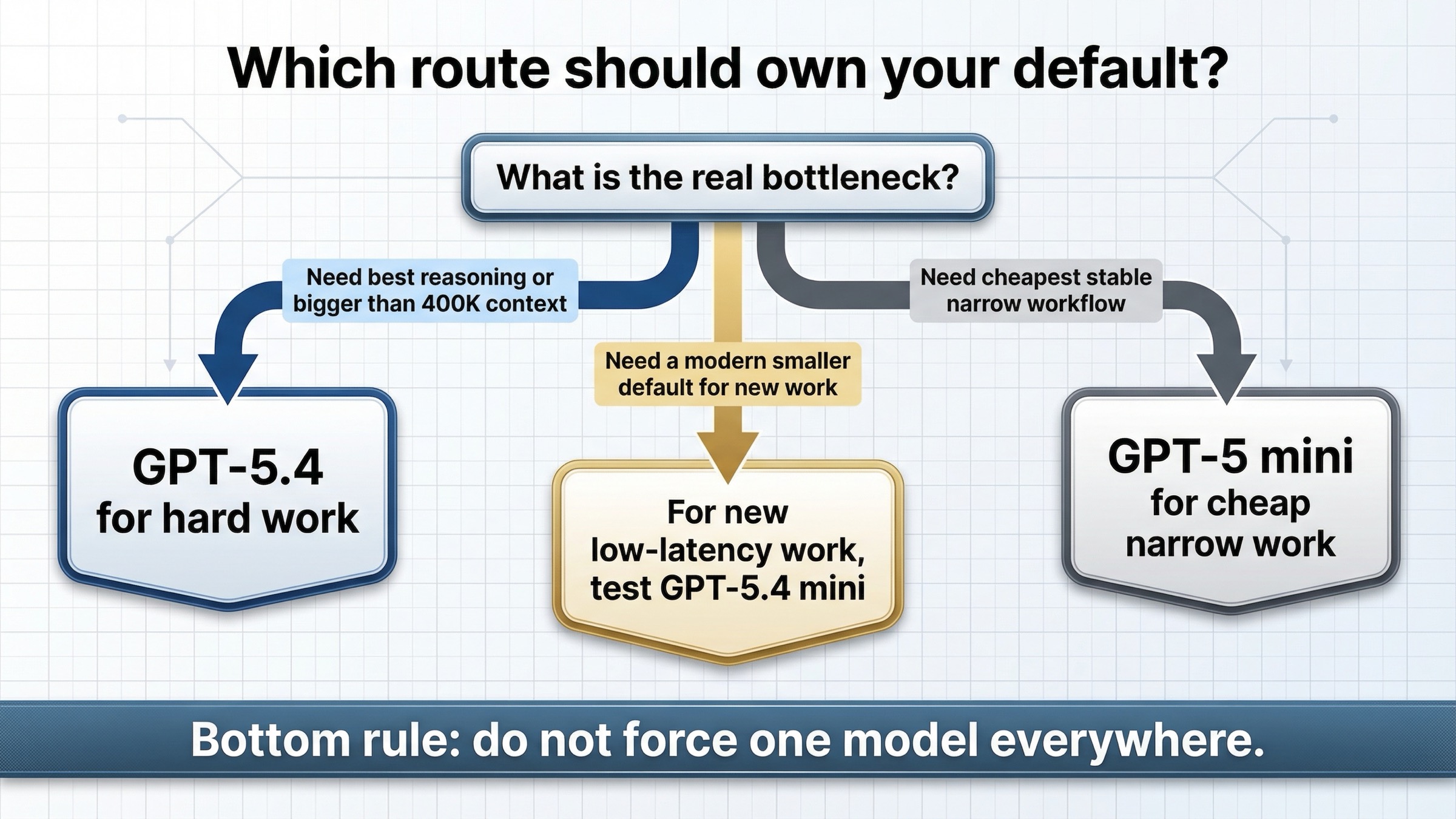

If you arrived here because you want something cheaper than GPT-5.4 for a new system, the current docs do not point you toward GPT-5 mini first. They point you toward GPT-5.4 mini.

That matters most in four situations.

The first is new low-latency product work. The current GPT-5 mini model page itself says most new low-latency, high-volume workloads should start with GPT-5.4 mini. So if you are not preserving a legacy deployment and you just want a smaller modern default, the better next read is GPT-5.4 mini vs GPT-5 mini.

The second is subagent architecture. The March 17 launch post explicitly describes GPT-5.4 as the larger planning and final-judgment model while GPT-5.4 mini handles narrower tasks in parallel. That is a much more modern use of the lineup than "premium model versus cheapest old mini." If your system uses specialist workers, delegated searches, or coding subtasks, GPT-5.4 mini is the model OpenAI is actually naming in that pattern.

The third is migration from older cheap reasoning routes like o4-mini or GPT-4.1 mini. The current latest-model guide tells you that GPT-5.4 mini is a great replacement for those routes. GPT-5 mini is not the migration story the official docs are pushing.

The fourth is Codex-style usage. In the March 17 launch post, OpenAI says GPT-5.4 mini is available across the Codex app, CLI, IDE extension, and web, and that it uses only 30% of GPT-5.4 quota in Codex. That is a strong signal that the modern cheap branch for coding assistance is no longer GPT-5 mini.

So if your real question is "what is the smaller modern model I should pair with GPT-5.4," the answer is usually not GPT-5 mini. It is GPT-5.4 mini.

API and Codex Guidance vs ChatGPT Surface Reality

This query attracts a lot of confusion because users see "mini" in multiple places and assume the ChatGPT picker, Help Center, and API model pages all describe the same thing in the same way.

They do not.

According to the March 18, 2026 Model Release Notes, GPT-5.4 mini is rolling out in ChatGPT for Free and Go users via the Thinking feature in the plus menu. For many paid users it shows up as a fallback for GPT-5.4 Thinking when rate limits are reached, and the note explicitly says GPT-5.4 mini does not appear as a normal selectable model in the picker.

That is a very different question from the API decision in this article. If you are choosing an API model, the model pages and current latest-model guide should carry more weight than the app picker. If you are choosing a ChatGPT plan or trying to understand visible app behavior, the Help Center release notes matter more.

The practical rule is simple: do not let ChatGPT naming confusion distort an API architecture decision.

Practical Routing Rules for Teams

If you need a team-facing recommendation table, use this one:

| Workload | Default pick | Why | What to test before overriding |
|---|---|---|---|
| Long-context repo or document work | GPT-5.4 | 1,050,000 context and much stronger long-context benchmark profile | Whether your prompt stack really uses more than 400K effectively |
| Tool-heavy coding or agent workflows | GPT-5.4 | Broader current tool surface and much better tool-use and terminal benchmarks | Whether the workflow can be split so GPT-5.4 mini handles narrower subtasks |
| Stable budget-first text generation | GPT-5 mini | 10x cheaper input and 7.5x cheaper output | Whether GPT-5.4 mini gives enough better latency or quality for acceptable extra cost |
| New low-latency, high-volume build | GPT-5.4 mini first, then GPT-5.4 if needed | Current official routing points new small-model work toward GPT-5.4 mini | Whether you actually need flagship context or tool depth |
| Mixed architecture with one premium lane and one cheap lane | GPT-5.4 + GPT-5.4 mini | Matches the current official product tree better than GPT-5.4 + GPT-5 mini | Whether a legacy GPT-5 mini branch still earns its keep on measured cost |

If your team wants one short decision tree, use this:

Start with GPT-5.4 when the hard case defines the product. Keep GPT-5 mini only when the cheap case defines the product. If neither is true and your real objective is a smaller modern default, route the evaluation toward GPT-5.4 mini instead of treating GPT-5 mini as the future.
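The decision tree above can be written down as a small routing function. This is a sketch of one possible encoding, not an official routing rule: the `Workload` fields and the way they define the "hard case" and "cheap case" are assumptions drawn from the rules in this article, and the returned strings are display names rather than API model IDs.

```python
from dataclasses import dataclass

# GPT-5 mini context window, from the figures quoted in this article.
GPT_5_MINI_CONTEXT = 400_000


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload description; all fields are illustrative."""
    failure_is_expensive: bool = False
    needs_flagship_only_tools: bool = False  # e.g. hosted shell, apply patch
    prompt_tokens: int = 0
    stable_and_tool_light: bool = False
    cost_dominates: bool = False


def pick_default(w: Workload) -> str:
    """Return the model to evaluate first for this workload."""
    hard_case = (
        w.failure_is_expensive
        or w.needs_flagship_only_tools
        or w.prompt_tokens > GPT_5_MINI_CONTEXT
    )
    if hard_case:
        return "GPT-5.4"
    if w.stable_and_tool_light and w.cost_dominates:
        return "GPT-5 mini"
    # Neither case defines the product: test the newer small model.
    return "GPT-5.4 mini"


print(pick_default(Workload(needs_flagship_only_tools=True)))
print(pick_default(Workload(stable_and_tool_light=True, cost_dominates=True)))
print(pick_default(Workload()))
```

The function only names the model to test first. The override checks in the table above still apply before any default becomes a production decision.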

If you are still deciding how to structure a general OpenAI API stack, our OpenAI API key guide and OpenAI API key free 2025 guide cover the setup side, while this page is the model-routing side.

FAQ

Is GPT-5 mini deprecated?

No. As of March 20, 2026, GPT-5 mini still has a current model page and current pricing. The point is not that it disappeared. The point is that it is no longer the model OpenAI is implicitly treating as the forward-looking cheap default for most new low-latency builds.

Is GPT-5.4 always worth the extra cost?

No. If the workload is narrow, stable, and cheap-text-first, GPT-5.4 can easily be overkill. The reason to pay for it is not status. It is that larger context, richer tools, and better high-end benchmark behavior reduce retries and architecture compromises on hard work.

What is the biggest practical difference between GPT-5.4 and GPT-5 mini?

The biggest practical difference is not a single benchmark number. It is the combination of much larger context, a newer cutoff, a much broader current tool surface, and a flagship product role built around professional and agentic work. That is a materially different operating range.

When should I compare GPT-5.4 against GPT-5.4 mini instead?

When your real goal is to choose a current premium lane and a current smaller lane for new work. If you are starting fresh in 2026 and want a cheaper modern route, GPT-5.4 mini is usually the more relevant comparison than GPT-5 mini.

What if I already run GPT-5 mini in production?

Do not migrate blindly. Benchmark the exact places where quality, tools, context, or reliability cost you money today. If the gains are small and the spend increase is large, keeping GPT-5 mini can still be rational. If the system is drifting toward agents, richer tool use, or larger contexts, the migration case gets much stronger.

The shortest honest conclusion is this: GPT-5.4 is the right model when the work is genuinely hard, GPT-5 mini is the right model when the work is genuinely cheap, and GPT-5.4 mini is the model many teams should test when they want the modern middle path.